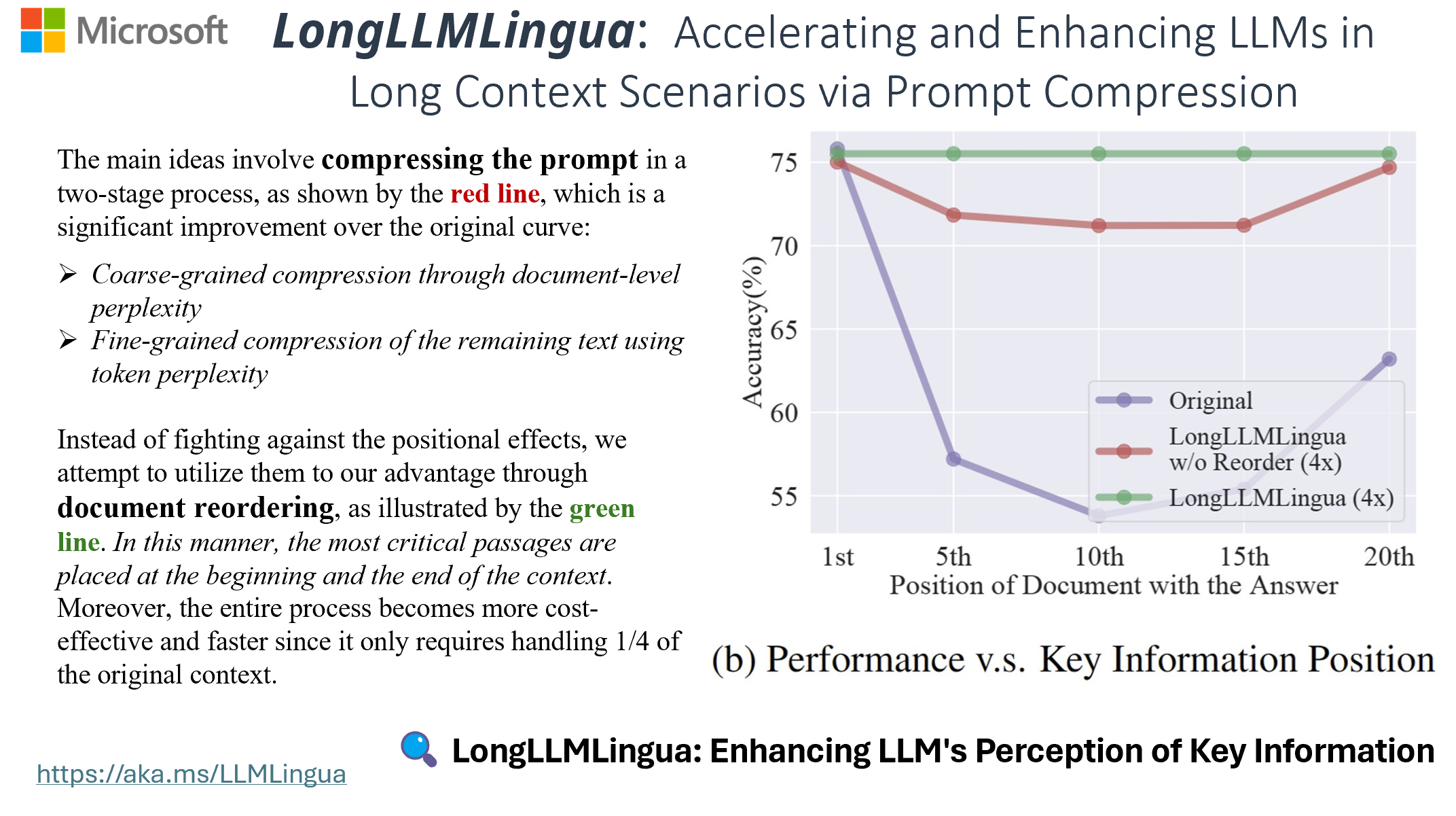

LongLLMLingua: Accelerating and Enhancing LLMs in Long Context Scenarios via Prompt Compression

Meta info.

- Authors: Huiqiang Jiang, et al.

- Paper: https://arxiv.org/pdf/2310.06839.pdf

- Affiliation: Microsoft Research

- Code: https://github.com/microsoft/LLMLingua

- References: predecessor work (LLMLingua): https://arxiv.org/abs/2310.05736 (EMNLP 2023, MSR)

TL;DR

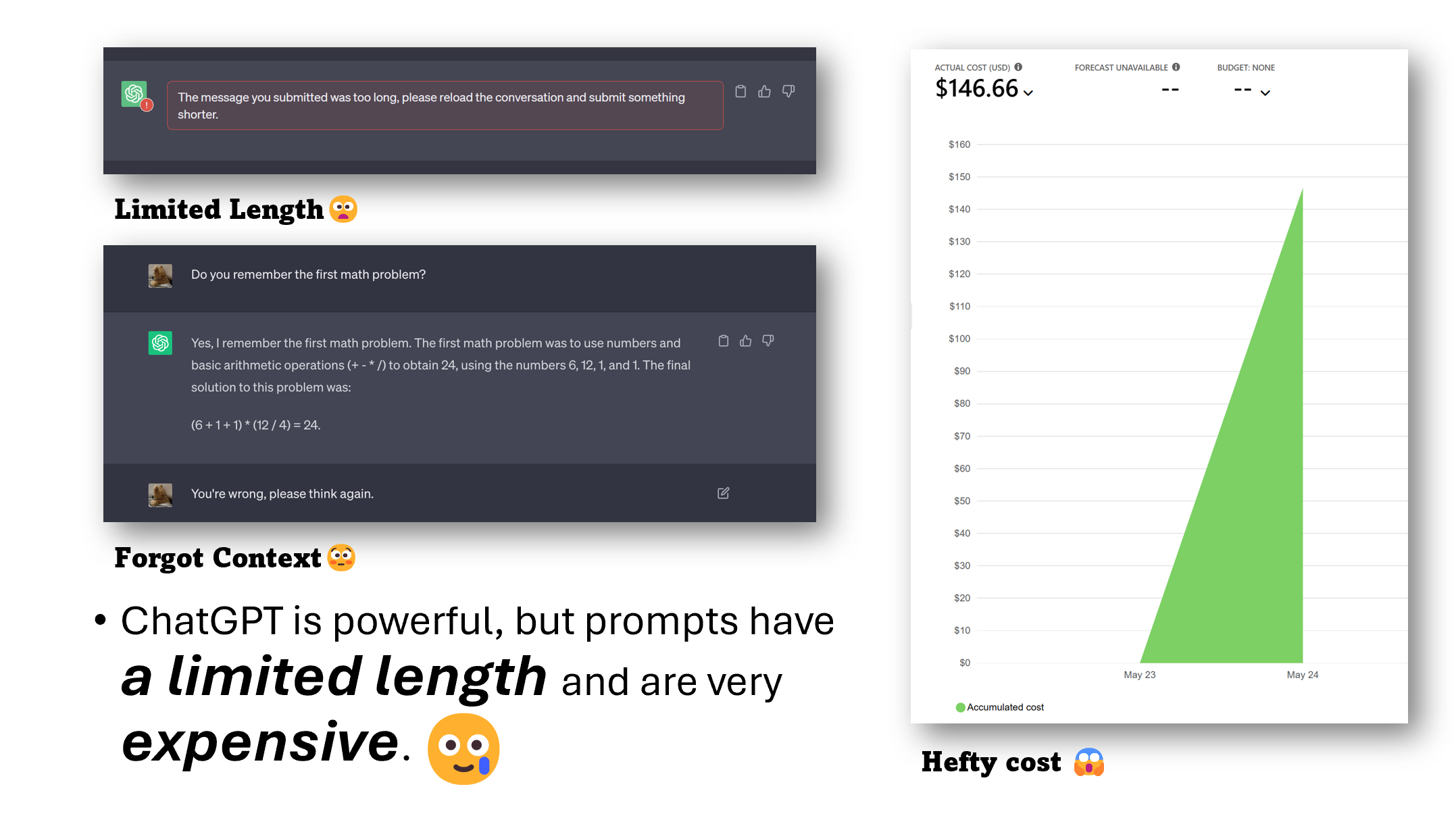

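A small language model (sLLM; e.g., GPT2-small, LLaMA-7B) identifies and then removes unnecessary tokens from the prompt (compression), achieving up to 20x compression while minimizing the loss in LLM performance.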

- predecessor: LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models (EMNLP 2023, MSR)

- demo: https://huggingface.co/spaces/microsoft/LLMLingua
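To make the "identify, then remove" idea from the TL;DR concrete, here is a minimal toy sketch in Python. It is not the LLMLingua API or the paper's algorithm: the paper scores tokens with a small language model, whereas this sketch substitutes a unigram frequency model built from the prompt itself as a stand-in, so that it runs without any model download. The function names `token_information` and `compress` and the `keep_ratio` parameter are hypothetical, introduced only for illustration.

```python
import math
from collections import Counter


def token_information(tokens):
    """Score each token by self-information, -log p(token).

    ASSUMPTION: p(token) comes from unigram counts over the prompt itself.
    This is only a stand-in for the small-LM scoring described in the notes.
    """
    counts = Counter(tokens)
    total = len(tokens)
    return [-math.log(counts[t] / total) for t in tokens]


def compress(tokens, keep_ratio):
    """Keep the most informative tokens, preserving their original order."""
    if not tokens:
        return []
    n_keep = max(1, math.ceil(len(tokens) * keep_ratio))
    scores = token_information(tokens)
    # Rank by descending score; break ties by earlier position.
    ranked = sorted(range(len(tokens)), key=lambda i: (-scores[i], i))
    kept = sorted(ranked[:n_keep])
    return [tokens[i] for i in kept]


prompt = "the cat sat on the mat and the dog sat on the rug".split()
compressed = compress(prompt, keep_ratio=0.5)
print(len(prompt), "->", len(compressed), " ".join(compressed))
```

In this toy run the frequent, low-information token "the" is dropped first, which mirrors the intuition that predictable tokens can be removed with little loss. A real setup would replace `token_information` with per-token scores from a small causal language model.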