Knowledge Fusion of Large Language Models

Meta info.

- Authors: Fanqi Wan, Xinting Huang, Deng Cai, Xiaojun Quan, Wei Bi, Shuming Shi

- Paper: https://arxiv.org/pdf/2401.10491.pdf

- Affiliation: Sun Yat-sen University, Tencent AI Lab

- Code: https://github.com/fanqiwan/FuseLLM

- Conference: ICLR 2024

TL;DR

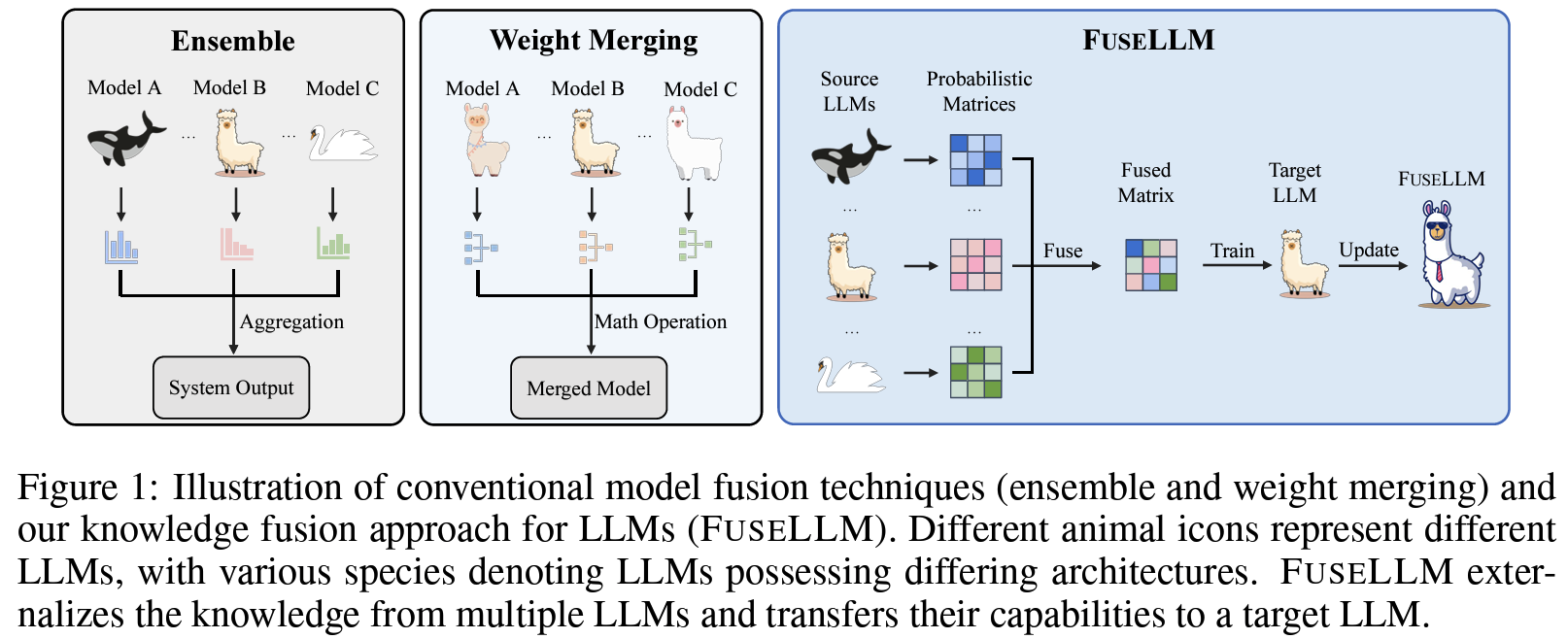

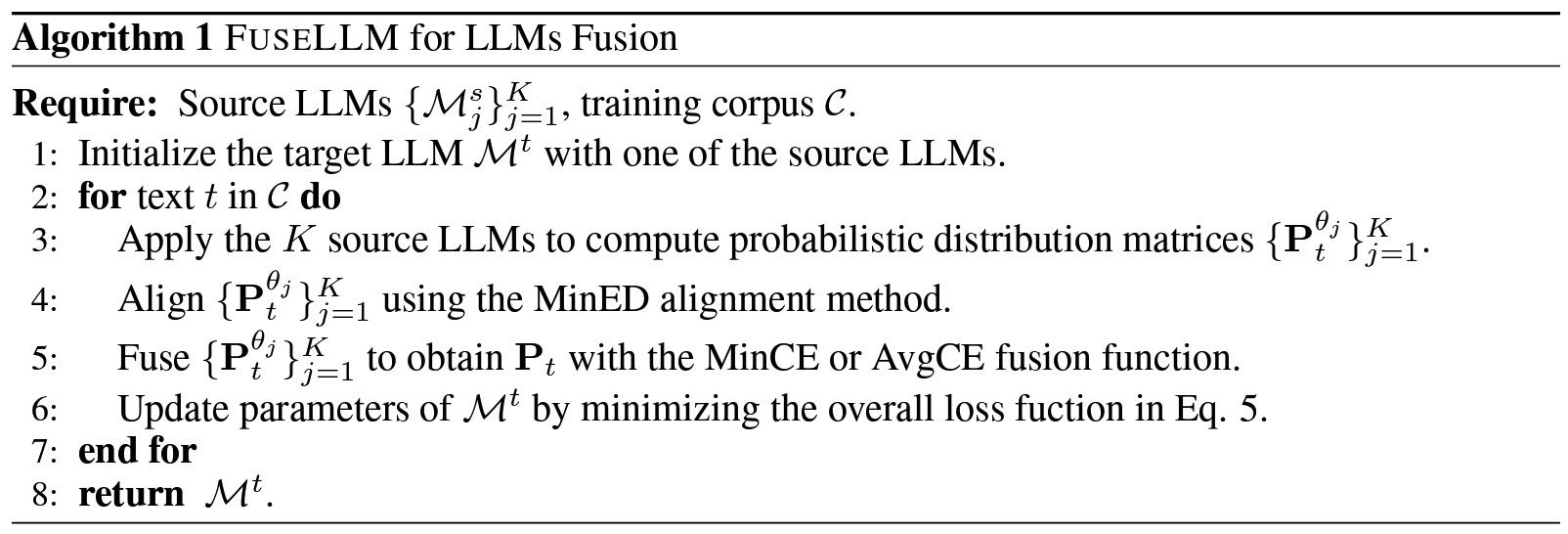

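A method for merging multiple existing LLMs (source LLMs), which have different architectures and were trained in different ways, into a single stronger model (pic1). The paper proposes externalizing the knowledge of the source LLMs and transferring their capabilities to a new LLM (target LLM) (pic2).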

Suggestions

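- source LLMs: Each is trained or fine-tuned separately on a different dataset, so they have different strengths and knowledge bases. Before fusion, each source LLM makes predictions on some data (to see what each LLM knows, i.e. where its strengths lie); each prediction is evaluated, and the most accurate one is used to train the LLM. (Next-token prediction; the causal language modeling objective is equivalent to minimizing negative log-likelihood.)

- target LLM: The LLM to be built by fusing the source LLMs. The final objective is to reduce the divergence between the source and target predictions.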
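The two roles above can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: it assumes all models share one already-aligned vocabulary, uses KL divergence as the divergence measure, and combines the two losses with a weight `lam` whose default value is a placeholder. The function names (`fuse_min_ce`, `fusion_loss`, `total_loss`) and the toy numbers are hypothetical.

```python
import math

def cross_entropy(probs, gold):
    """Mean negative log-likelihood of the gold tokens (the CLM objective)."""
    return -sum(math.log(p[g]) for p, g in zip(probs, gold)) / len(gold)

def fuse_min_ce(source_probs, gold):
    """Keep the source whose predictions have the lowest cross-entropy on gold.

    Assumption: selecting the single most accurate source per sequence.
    """
    scores = [cross_entropy(p, gold) for p in source_probs]
    best = min(range(len(scores)), key=scores.__getitem__)
    return best, source_probs[best]

def kl_divergence(p, q):
    """KL(p || q) for two distributions over the same vocabulary."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def fusion_loss(fused, target):
    """Mean per-position divergence between fused source and target predictions."""
    return sum(kl_divergence(f, t) for f, t in zip(fused, target)) / len(fused)

def total_loss(target, fused, gold, lam=0.9):
    """Weighted sum of the CLM loss and the fusion loss (lam is an assumed weight)."""
    return lam * cross_entropy(target, gold) + (1 - lam) * fusion_loss(fused, target)

# Toy example: 2 positions, vocabulary of 3 tokens, gold tokens 0 and 2.
gold = [0, 2]
source_a = [[0.7, 0.2, 0.1], [0.1, 0.3, 0.6]]
source_b = [[0.4, 0.4, 0.2], [0.3, 0.4, 0.3]]
target = [[0.5, 0.3, 0.2], [0.2, 0.3, 0.5]]

best, fused = fuse_min_ce([source_a, source_b], gold)
loss = total_loss(target, fused, gold)
```

In this toy setup `source_a` puts more probability on the gold tokens, so it is the one selected, and the target is then pulled toward both the gold tokens and `source_a`'s distributions.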

Effects

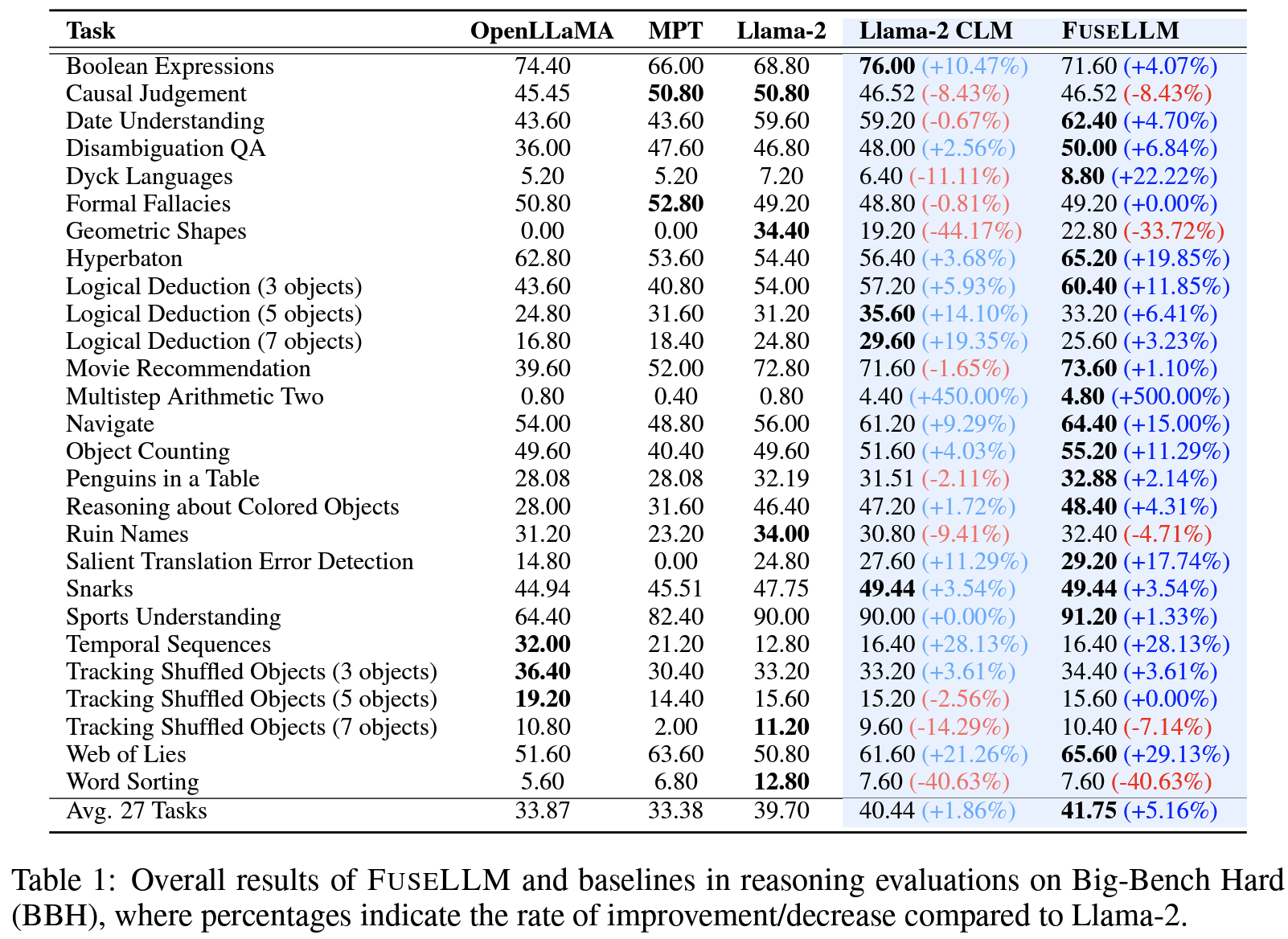

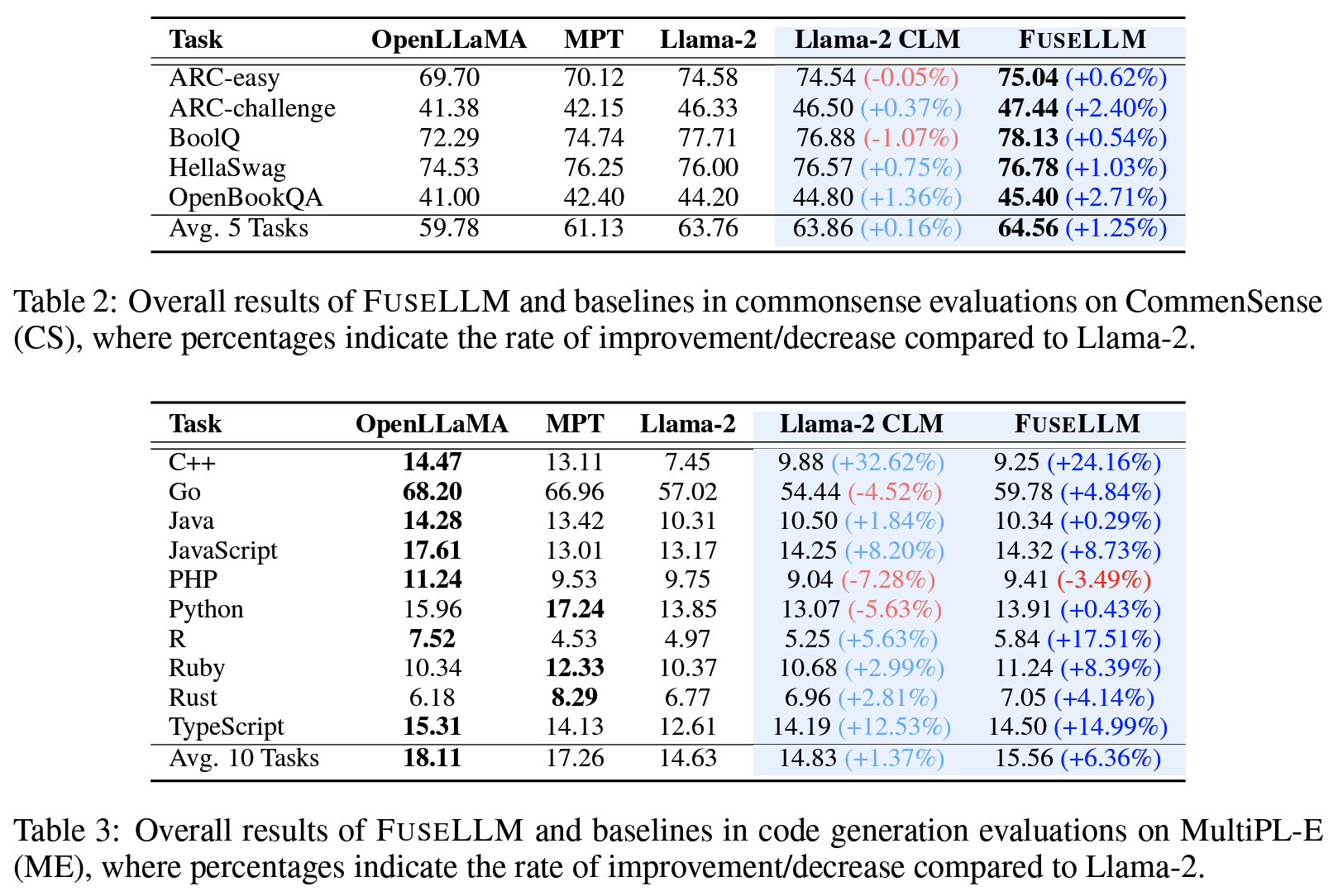

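Uses Llama-2, MPT, and OpenLLaMA as source LLMs; evaluated on BBH, CS, ME, and other tasks (pic3, 4). Overall, the proposed FuseLLM shows an average performance improvement of about 6.36%.

- BBH: Overall, FuseLLM improves on Llama-2 (the best of the source LLMs) by 5.16%. The lower performance on some tasks, such as Dyck Languages, is attributed to either poor performance of the other source LLMs or training data that may not be closely related to the task.

- CS: Consistently better performance, with larger improvements on difficult tasks such as ARC and OpenBookQA.

- ME (Code Generation): Outperforms Llama-2, but there is still room for improvement.

- For the other tasks, see the appendix.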