ChatQA 2: Bridging the Gap to Proprietary LLMs in Long Context and RAG Capabilities

Meta info.

- Authors: Peng Xu, Wei Ping, Xianchao Wu, Zihan Liu, Mohammad Shoeybi, Bryan Catanzaro

- Paper: https://arxiv.org/pdf/2407.14482

- Affiliation: NVIDIA

TL;DR

Building on the previously released ChatQA 1.5, the paper presents a recipe for extending the context length of Llama3-70B while improving its instruction-following and RAG capabilities.

Suggestions

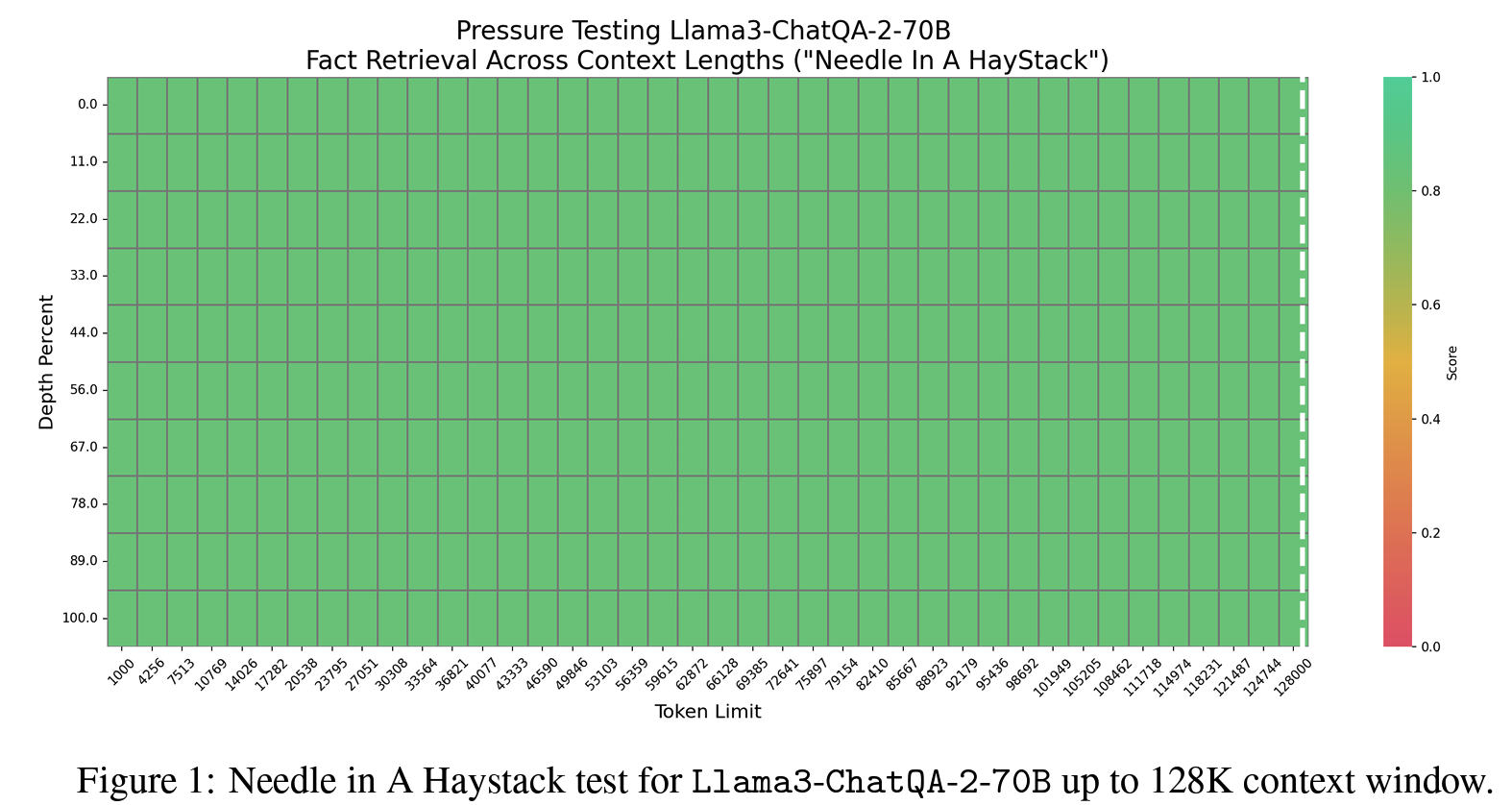

- Extend the Llama3-70B input length from 8K to 128K tokens

- Continued pretraining on SlimPajama

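- Separating documents with a special token works better than using the reserved BOS and EOS tokens: after pretraining, Llama3's BOS and EOS tokens signal the model to ignore the preceding text chunks, which is inefficient for long-context adaptation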

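- Instruction tuning to improve RAG and long-context capability

- Stage 1: instruction tuning on 128K instruction-following samples

- Stage 2: training on a blend of context-grounded and conversational QA data (up to 4K input tokens)

- Stage 3: SFT on a collected long-context dataset, up to 128K tokens (?)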

- Long-context retriever

- Use a long-context retriever instead of a top-k chunk-wise retriever
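
As a rough illustration of the continued-pretraining bullets above, here is a minimal sketch of packing tokenized documents into fixed-length training sequences with a separator token between documents. The separator id, the tiny sequence length, and the packing scheme itself are assumptions made for illustration; this note does not describe how the authors actually pack their data.

```python
from typing import Iterable, Iterator

SEP_ID = 1    # hypothetical id of the document-separator token
SEQ_LEN = 8   # tiny window for the demo; the target in the paper is 128K tokens


def pack_documents(
    docs: Iterable[list[int]],
    seq_len: int = SEQ_LEN,
    sep_id: int = SEP_ID,
) -> Iterator[list[int]]:
    """Concatenate tokenized documents, append sep_id after each one,
    and cut the resulting stream into windows of exactly seq_len tokens."""
    buffer: list[int] = []
    for doc in docs:
        buffer.extend(doc)
        buffer.append(sep_id)
        # Emit as many full windows as the buffer currently holds.
        while len(buffer) >= seq_len:
            yield buffer[:seq_len]
            buffer = buffer[seq_len:]
    # The trailing partial window is dropped in this sketch.


# Toy "tokenized" documents (the ids are arbitrary).
docs = [[10, 11, 12], [20, 21, 22, 23, 24], [30, 31], [40, 41, 42, 43, 44, 45, 46]]
packed = list(pack_documents(docs))
for seq in packed:
    print(seq)
```

A single window can span several documents, so the separator is the only signal the model gets about document boundaries. That is why the choice of separator token matters for long-context training.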

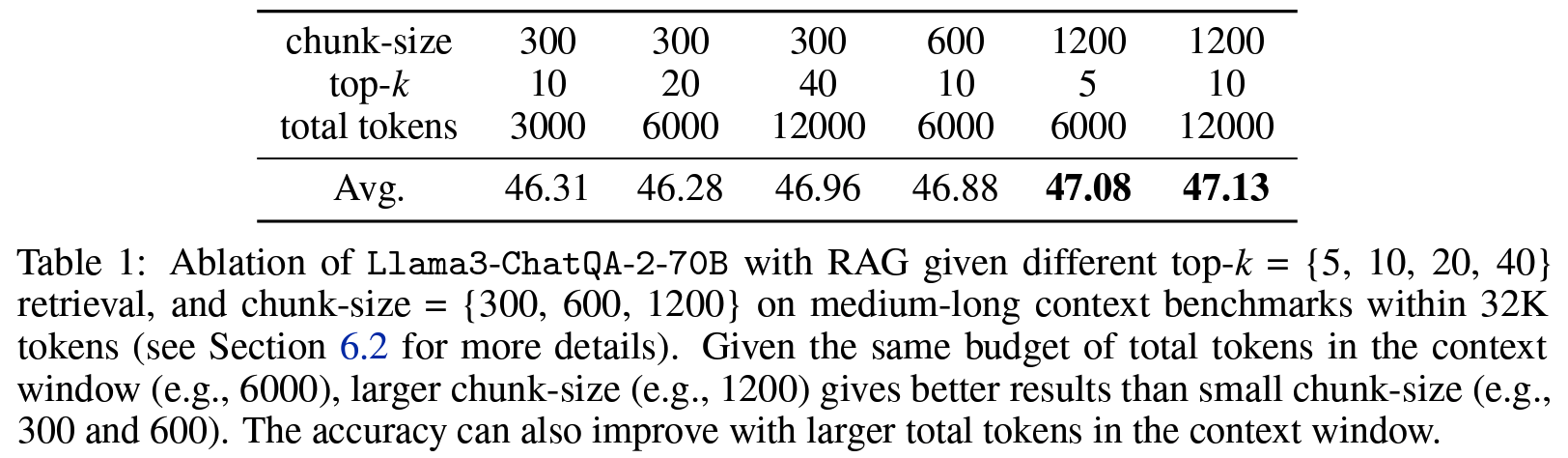

- Longer chunks performed better; weighing cost by the total number of tokens used, the authors adopt chunk size 1200 with top-5 retrieval

- Encoder: E5-mistral

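
The chunk size 1200 + top-5 setting above implies a retrieval budget of roughly 1200 × 5 = 6000 tokens per query. Below is a minimal sketch of chunk-then-rank retrieval under that budget. The bag-of-words `embed` function is a toy stand-in (an assumption, not E5-mistral), and all function names are hypothetical.

```python
import math
from collections import Counter

CHUNK_SIZE = 1200  # tokens per chunk (from the note)
TOP_K = 5          # number of chunks kept (from the note)
TOKEN_BUDGET = CHUNK_SIZE * TOP_K  # total retrieved tokens per query


def chunk_tokens(tokens: list[str], chunk_size: int = CHUNK_SIZE) -> list[list[str]]:
    """Split a token list into consecutive chunks of at most chunk_size tokens."""
    return [tokens[i:i + chunk_size] for i in range(0, len(tokens), chunk_size)]


def embed(tokens: list[str]) -> Counter:
    """Toy bag-of-words 'embedding'; a real system would call a dense encoder."""
    return Counter(tokens)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: list[str], doc: list[str],
             chunk_size: int = CHUNK_SIZE, top_k: int = TOP_K) -> list[list[str]]:
    """Return the top_k chunks of doc ranked by similarity to the query."""
    q = embed(query)
    chunks = chunk_tokens(doc, chunk_size)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]


# Toy document: one 1200-token "needle" chunk surrounded by padding.
doc = ["pad"] * 2400 + ["needle"] * 1200 + ["pad"] * 2400
hits = retrieve(["needle"], doc, top_k=2)
print(len(hits), hits[0][0], TOKEN_BUDGET)
```

Holding the total budget fixed, the trade-off is between a few long chunks and many short ones. The note reports that longer chunks did better.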

Effect

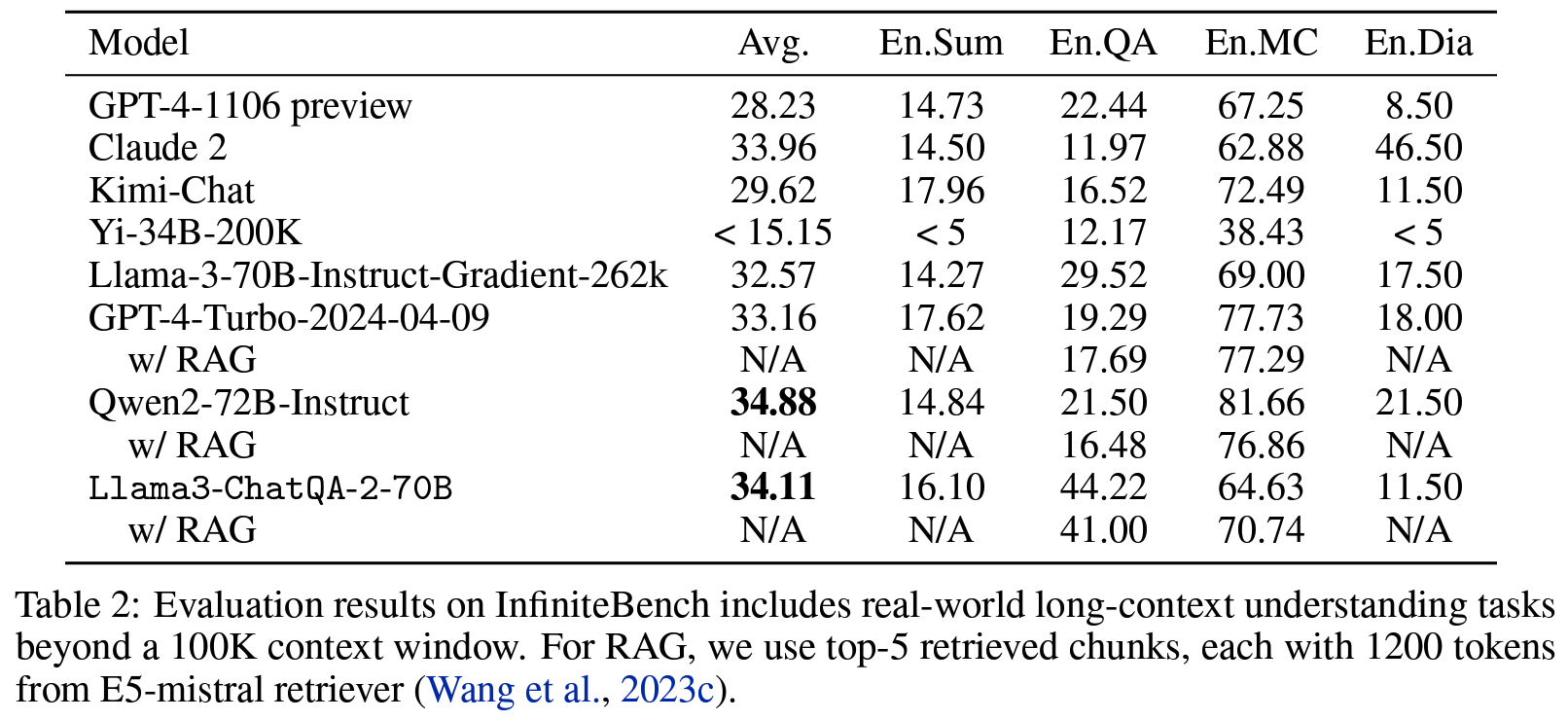

Accuracy comparable to GPT-4-Turbo-2024-04-09

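Personal note. This reads more like a technical report, and there is not much that counts as a new insight. Still, the authors seem to be steadily working on improving conversational capability with smaller models, so I am following along.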