Adversarial Policy Optimization for Offline Preference-based Reinforcement Learning

Meta info.

- Authors: Hyungkyu Kang, Min-hwan Oh

- Paper: https://arxiv.org/pdf/2503.05306

- Affiliation: SNU

- Published: March 7, 2025

- Conference: ICLR2025

TL; DR

PbRL을 위한 적대적 선호기반 최적화 방법론 APPO 제안

Background

인간의 preference feedback활용하면 RL에서 reward design이 어렵다는 한계를 극복할 수 있더라

Problem States

human feedback이 비싸고 실시간으로(online) 받기도 어려우며, Offline PbRL에서는 보수성conservatism 확보를 위해서 신뢰구간 계산을 해주는데 계산적으로 복잡함

- Research Question: 계산 효율이랑 (confidence set 없어도) 보수성 다 챙기는 offline PbRL 없을까

Suggestion

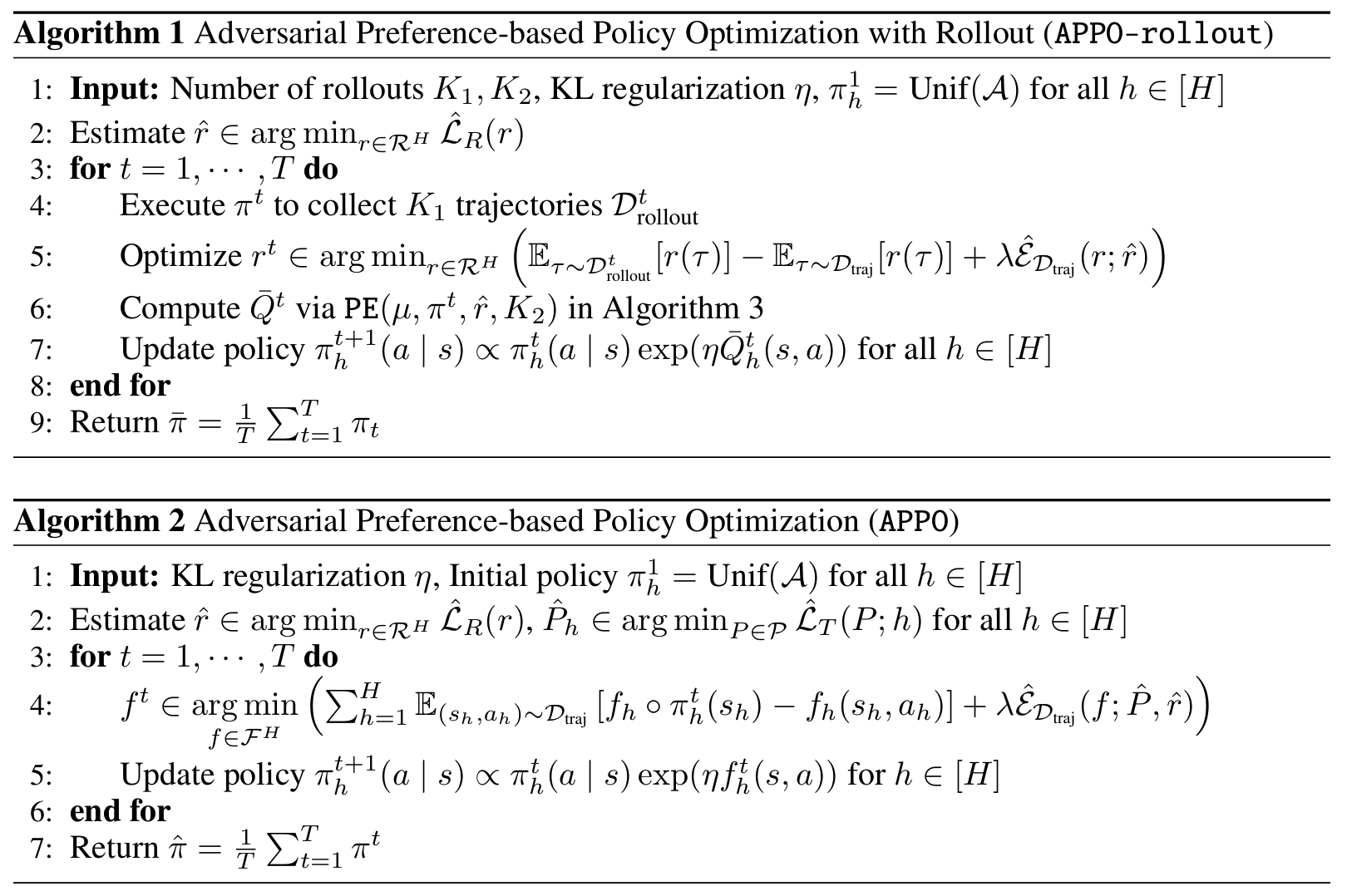

APPO

- PbRL을 정책(policy)과 reward 모델 간 2자 게임(two-player game)으로 재구성

- leader (policy model, π): maximize preference score, TRPO

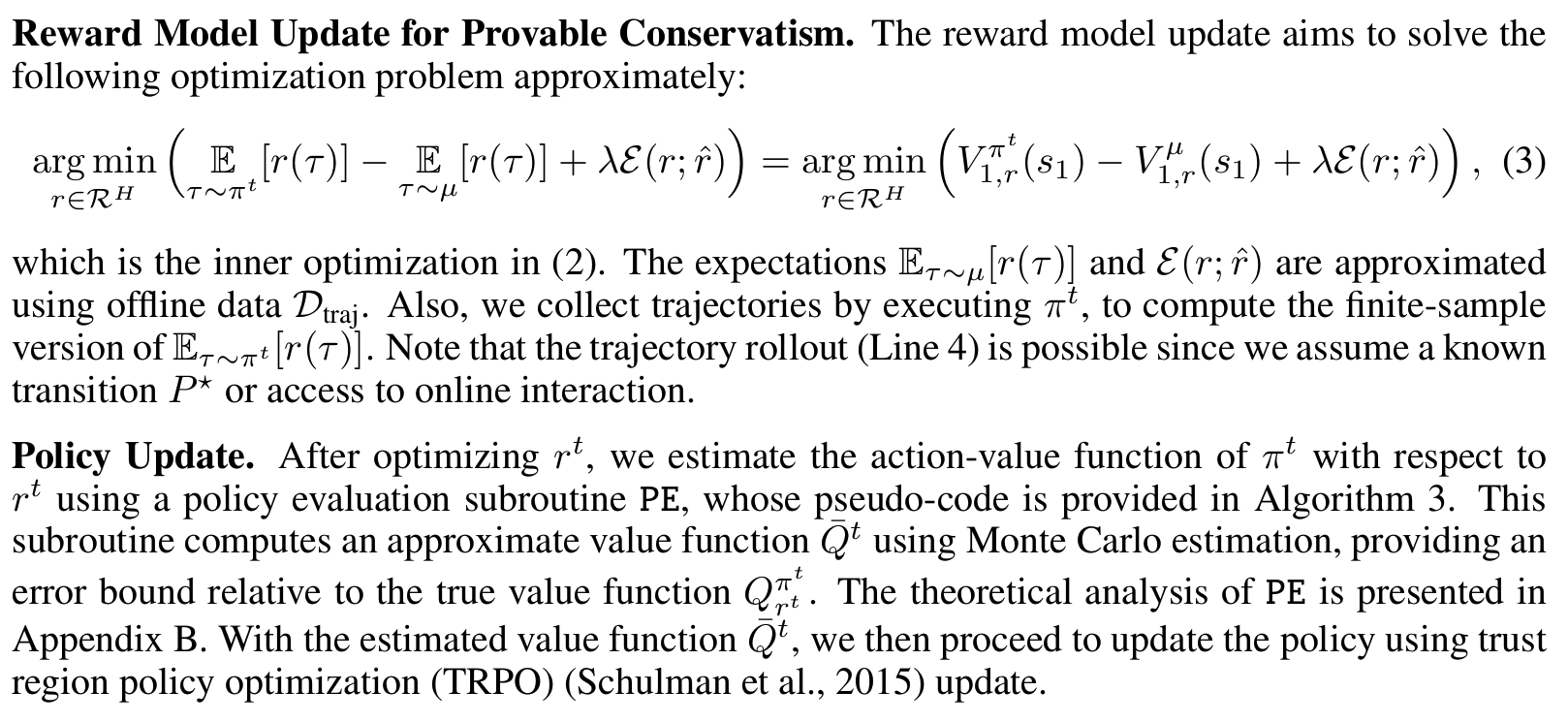

- follower (reward model, r): 보수적인 reward model을 adversarial optimization

- leader가 너무 불확실한 곳에서 탐험하지 않도록 제약

- 이론적으로 샘플 복잡도를 증명하여 기존 방법보다 계산 효율성에서 이득

- feedback 수가 적어도 optimized policy 학습 가능

Effects

실험적으로 continuous control 환경에서 최신 방법론과 비등하거나 더 나음

- target task: Meta-World Benchmark

- baseline: Markovian reward, preference transformer, dppo, ipl(inverse preference learning)

table1: # of feedback 500/1000 기준으로 아무쪼록 appo가 모든 실험에서 가장 높은 평균순위 기록

Personal note. 앞부분에 소개하는 preliminary가 충분히 친절해서 정리하는 느낌도 받아서 시작했는데 이론적인 흐름 위주라 무척 건조한 편이긴 하네요. 차근히 따라가면 모든 이론을 완벽하게 이해하지는 못했지만 하이컨셉에서 의도와 목적 결과를 이해할 수는 있었습니다.