LongLLaDA: Unlocking Long Context Capabilities in Diffusion LLMs

Meta info.

- Authors: Xiaoran Liu, Zhigeng Liu, Zengfeng Huang, Qipeng Guo, Ziwei He, Xipeng Qiu

- Paper: https://arxiv.org/pdf/2506.14429

- Affiliation: Fudan Univ., Shanghai AI Lab, Shanghai Innovation Institute

- Published: June 17, 2025

- Code: https://github.com/OpenMOSS/LongLLaDA

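TL;DR

Proposes LongLLaDA, building on the observation that diffusion-based LLMs keep their performance stable beyond the context length seen in training, thanks to their "local perception". Using NTK-based RoPE extrapolation, LongLLaDA extends the input length of diffusion-based LLMs up to 24k tokens with no training required.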

Background

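- Diffusion-based LLMs are drawing attention as an alternative to auto-regressive (causal Transformer) LLMs, e.g., for handling long-range dependencies and overcoming the reversal curse

- So far their performance has been checked mainly on short-to-medium texts, and their long-context (LC) capability has not been reported or validated

- For AR LLMs, NTK-based RoPE scaling and similar methods have shown that context length can be extended at inference time without retraining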

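- RoPE: rotary position embedding

- Encodes position by rotating each query/key dimension pair by a position-dependent sinusoidal angle, so the QK dot product depends only on the relative position (used by most AR LLMs)

- Limitation: it only works up to the max length seen in training. The high-dimensional (low-frequency) sin/cos components have periods longer than the training length, so positions beyond it produce angles never seen in training (OOD)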

- NTK-based RoPE scaling: rescaling the RoPE base (and hence the periods) from a Neural Tangent Kernel perspective enables extrapolation

- https://www.reddit.com/r/LocalLLaMA/comments/14lz7j5/ntkaware_scaled_rope_allows_llama_models_to_have/

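- tl;dr: enlarging the rotary base slows the rotation (lengthens the periods of the low-frequency dimensions), so positions beyond the training length map onto angles the model saw during training. This effectively "fools" the model into treating the longer input as if it were a trained length.

- Large rotary base > longer periods, sin/cos change slowly > can represent distant position differences

- Small rotary base > fast oscillation > sensitive to short-range position differences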

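The following minimal NumPy sketch makes the relative-position and base/period points concrete. It assumes the interleaved-pair layout, where dims 2i and 2i+1 rotate together, and a head dim of 128. Real implementations differ in layout (some rotate the two halves instead), and `rope_freqs` and `apply_rope` are hypothetical helper names, not the paper's code.

```python
import numpy as np

def rope_freqs(head_dim: int, base: float) -> np.ndarray:
    """Angular frequency theta_i = base**(-2i/head_dim) for each of the head_dim/2 pairs."""
    return base ** (-np.arange(0, head_dim, 2) / head_dim)

def apply_rope(x: np.ndarray, pos: int, base: float) -> np.ndarray:
    """Rotate each pair (x[2i], x[2i+1]) by the angle pos * theta_i."""
    angles = rope_freqs(x.shape[-1], base) * pos
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[0::2], x[1::2]
    out = np.empty_like(x)
    out[0::2] = x_even * cos - x_odd * sin
    out[1::2] = x_even * sin + x_odd * cos
    return out

rng = np.random.default_rng(0)
q, k = rng.standard_normal(128), rng.standard_normal(128)
base = 500_000.0  # beta_0 quoted in this note

# Relative-position property: shifting both positions by 1000 leaves the score unchanged.
score_near = apply_rope(q, 10, base) @ apply_rope(k, 3, base)
score_far = apply_rope(q, 1010, base) @ apply_rope(k, 1003, base)
print(np.isclose(score_near, score_far))

# Period of pair i is 2*pi / theta_i; a larger base gives longer periods (slower rotation).
periods = 2 * np.pi / rope_freqs(128, base)
periods_4x = 2 * np.pi / rope_freqs(128, 4 * base)
print(f"shortest period: {periods[0]:.2f}, longest period: {periods[-1]:,.0f}")
print(np.all(periods_4x >= periods))
```

Multiplying the base by 4 leaves the fastest pair (period 2π) unchanged and stretches every slower pair. This is the sense in which a larger base rotates more slowly.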

Problem Statement

How well do D-LLMs (diffusion LLMs) handle long context (LC)?

- Can NTK-based RoPE scaling be applied to them?

- Can the context length be extended without training?

Proposal

LongLLaDA: apply the NTK-based RoPE scaling used for AR LLMs to diffusion LLMs, training-free

- Original rotary base: β0 = 500,000

- Scaling factor: λ = 4, 14, … (the base becomes λ·β0)

- λ is set according to the target extrapolation length
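This note does not spell out how λ is derived from the target length. The sketch below assumes the "critical dimension" rule from RoPE extrapolation scaling-law work, which is my assumption rather than something the note states. The rule finds the first RoPE pair whose period does not fit into the training length, then enlarges the base until that pair's period reaches the target length. The sketch also assumes a LLaDA training length of 4096 and a head dim of 128. `critical_dim` and `ntk_scaling_factor` are hypothetical names.

```python
import math

def critical_dim(head_dim: int, base: float, train_len: int) -> int:
    """Dims (2 per pair) whose full period fits in train_len; the next pair is the first under-trained one.

    Pair i has period 2*pi*base**(2i/head_dim), which fits when 2i/head_dim <= log_base(train_len / 2pi).
    """
    return 2 * math.ceil((head_dim / 2) * math.log(train_len / (2 * math.pi), base))

def ntk_scaling_factor(target_len: int, head_dim: int = 128,
                       base: float = 500_000.0, train_len: int = 4096) -> int:
    """Hypothetical helper: smallest integer lambda that stretches the critical pair's period to target_len."""
    d_c = critical_dim(head_dim, base, train_len)
    # Solve 2*pi * new_base**(d_c / head_dim) = target_len for new_base.
    new_base = (target_len / (2 * math.pi)) ** (head_dim / d_c)
    return math.ceil(new_base / base)

for target in (8192, 16384, 24576, 32768):
    lam = ntk_scaling_factor(target)
    print(f"{target // 1024}K -> lambda = {lam}, new base = {lam * 500_000:,}")
```

Under these assumptions the loop gives λ = 4, 14, 31, 55 for 8K, 16K, 24K and 32K. The first two match the λ=4 and λ=14 quoted here, but treat that agreement as suggestive rather than confirmed.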

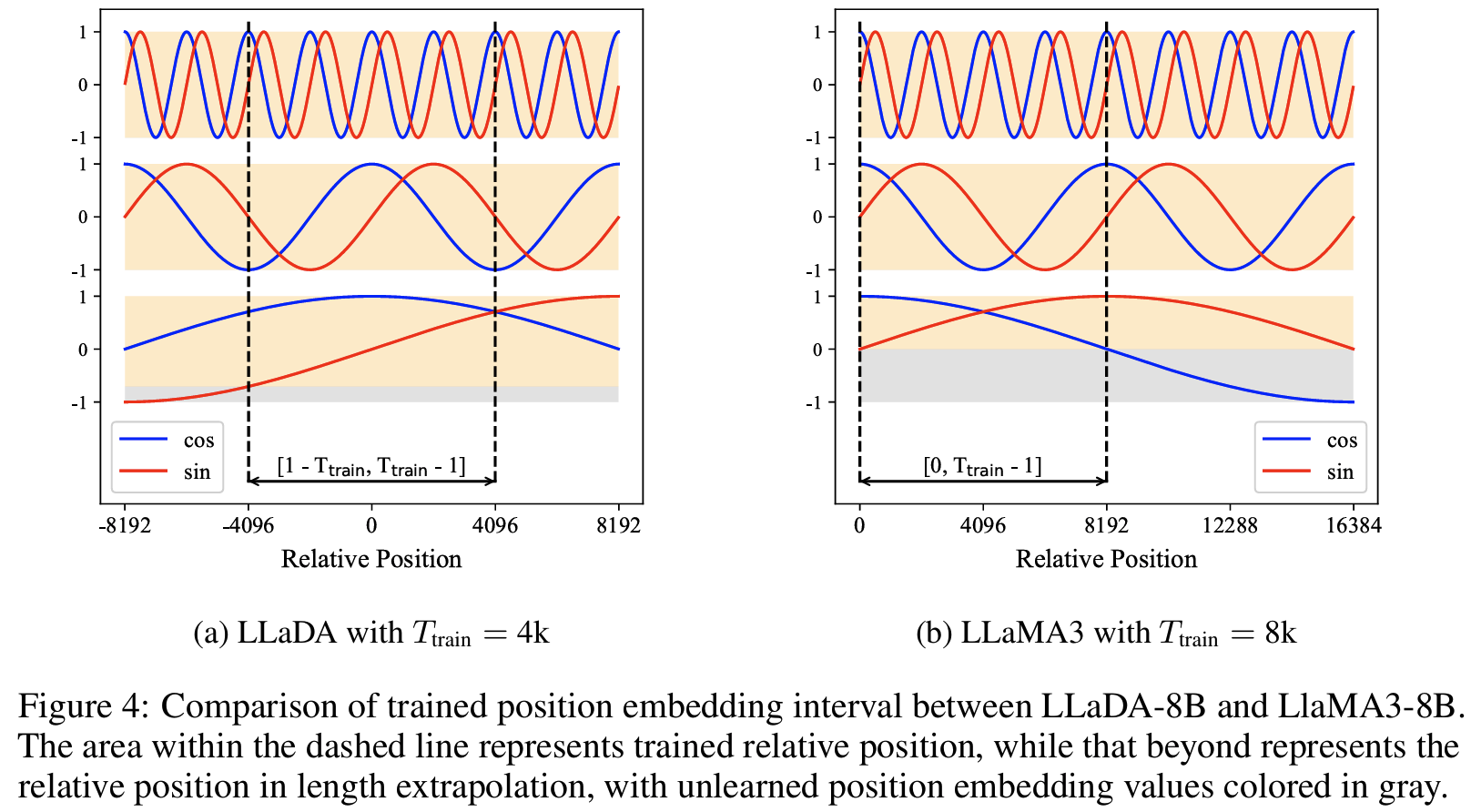

Eq. (1)

- Increasing the number of sampling steps increases LLaDA's retrieval depth

Fig 3


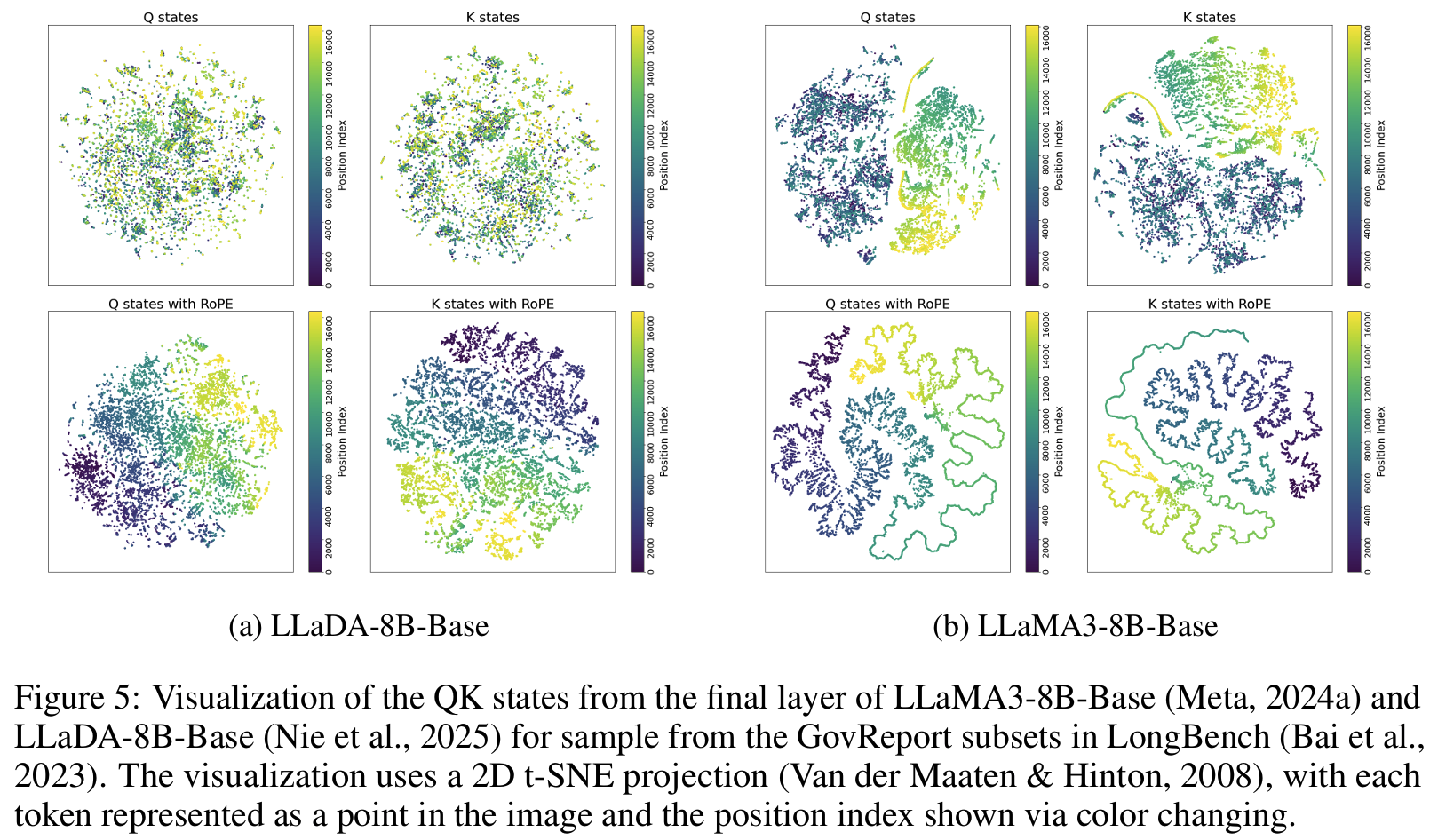

- In terms of RoPE sin/cos periods, the OOD region is smaller than for AR (causal attention) models > more robust to extrapolation

- DLLMs use bidirectional attention, so the relative positions they observe are symmetric (both signs)
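The small sketch below illustrates the symmetry point. It enumerates the relative offsets (query position minus key position) that a causal mask and a bidirectional mask expose for a toy length T. `observed_relative_positions` is a hypothetical helper, and the offset sign convention is chosen so that the causal case matches the [0, T-1] range used in this note.

```python
import numpy as np

def observed_relative_positions(seq_len: int, causal: bool) -> np.ndarray:
    """Sorted unique offsets (query_pos - key_pos) that attention can see for seq_len tokens."""
    query = np.arange(seq_len)[:, None]  # rows: query positions
    key = np.arange(seq_len)[None, :]    # columns: key positions
    rel = query - key                    # offset for every (query, key) pair
    # Causal (AR): a query only attends to keys at or before it. Bidirectional (DLLM): all keys.
    mask = key <= query if causal else np.ones(rel.shape, dtype=bool)
    return np.unique(rel[mask])

T = 8
print("causal (AR):         ", observed_relative_positions(T, causal=True))
print("bidirectional (DLLM):", observed_relative_positions(T, causal=False))
```

With T = 8, the causal mask yields offsets 0..7 and the bidirectional mask yields -7..7. In the bidirectional case, every RoPE angle is therefore seen with both signs during training.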

Fig 4 (the NIAH experiment in Fig 3 is discussed below)


Results

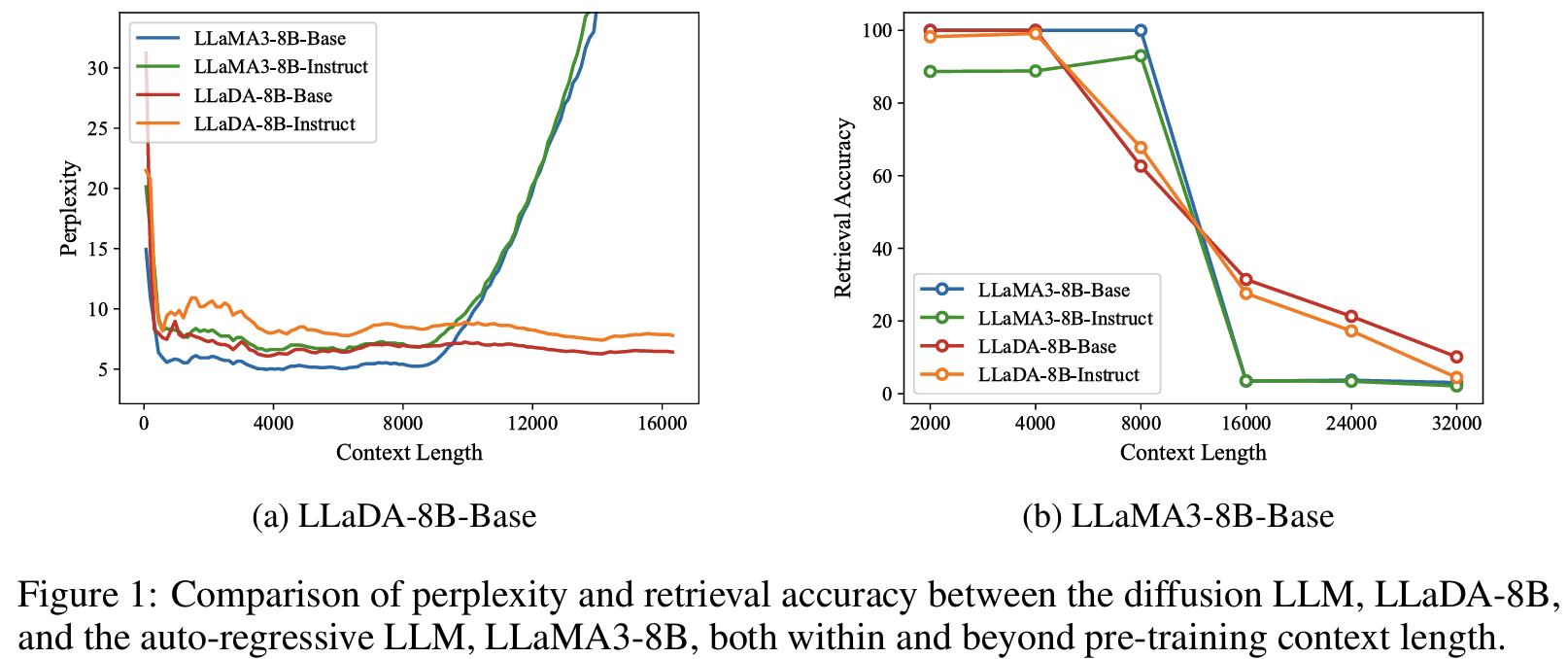

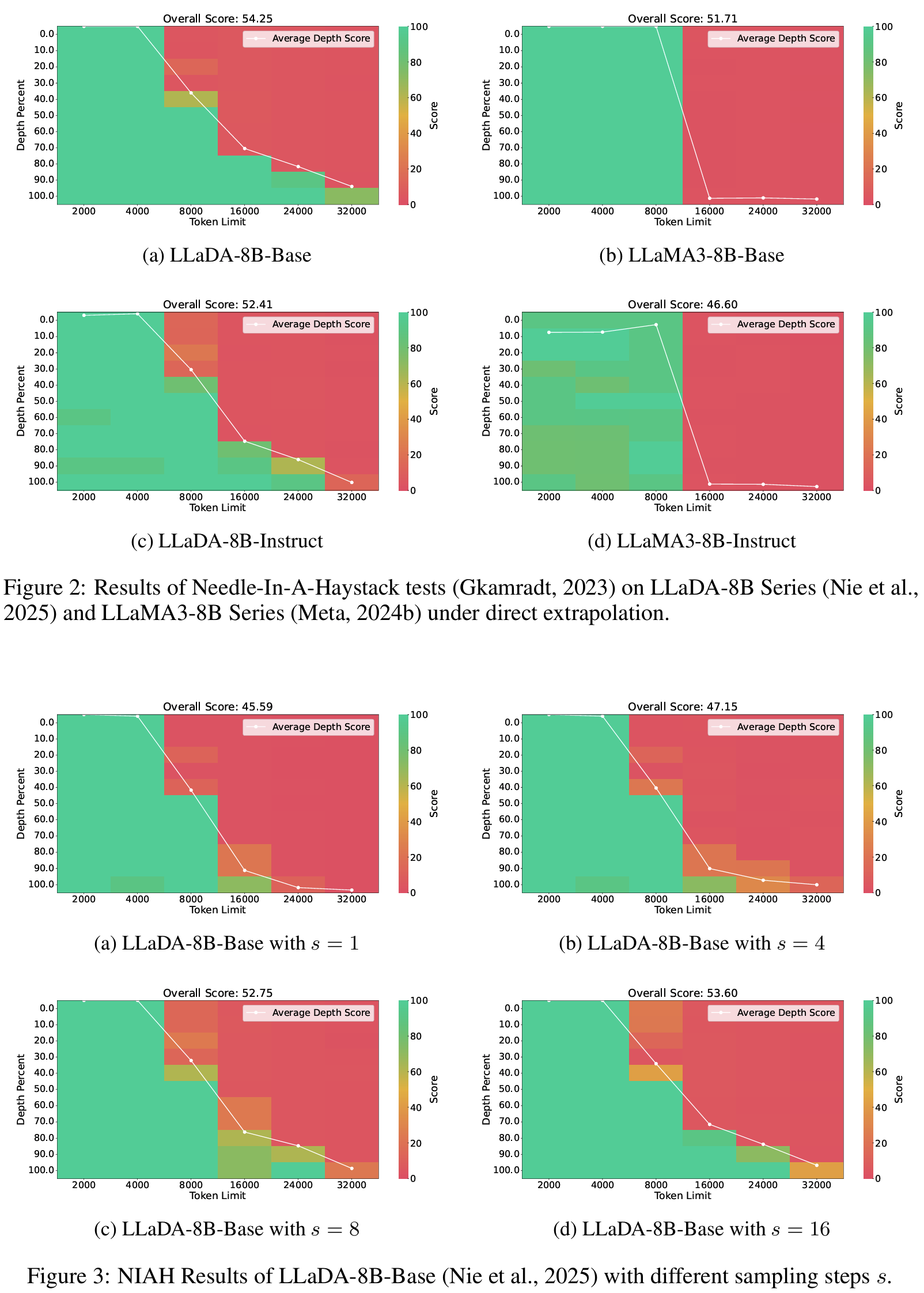

- NIAH (needle-in-a-haystack): measures retrieval accuracy and confirms the local-perception strength of the diffusion architecture
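The minimal sketch below shows how a single NIAH test case is typically built. A "needle" sentence is inserted at a chosen relative depth inside filler text of a given length, and the model's answer is scored by whether it contains the needle's value. Whitespace-separated words stand in for tokens. `build_niah_prompt`, `niah_score`, and the needle text are hypothetical, and the paper's actual haystack and scoring may differ.

```python
def build_niah_prompt(filler: list[str], needle: str, depth: float,
                      context_words: int, question: str) -> str:
    """Place `needle` at relative `depth` (0.0 = start, 1.0 = end) of `context_words` filler words."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be in [0, 1]")
    # Repeat the filler until it is long enough, then cut it to the requested length.
    repeats = context_words // len(filler) + 1
    haystack = (filler * repeats)[:context_words]
    cut = round(depth * len(haystack))
    words = haystack[:cut] + needle.split() + haystack[cut:]
    return " ".join(words) + "\n\n" + question

def niah_score(response: str, answer: str) -> float:
    """1.0 if the expected answer string appears in the model response, else 0.0."""
    return 1.0 if answer in response else 0.0

filler = "The grass is green. The sky is blue. The sun is bright.".split()
needle = "The special magic number is 7421."
prompt = build_niah_prompt(filler, needle, depth=0.5, context_words=2000,
                           question="What is the special magic number?")
# A full NIAH run sweeps a grid of (context length, depth) and plots the mean score as a heatmap.
print(len(prompt.split()), niah_score("It is 7421.", "7421"))
```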

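Fig 2-3

- AR LLMs only see relative positions [0, T-1] during training > they observe only the (+) half of each sin/cos curve > the whole (-) side is OOD, and LC performance collapses beyond the training length (NIAH 0%)

- Diffusion LLMs see [−T+1, T−1] > they observe both the (+) and (-) sides of sin/cos symmetrically > they may lose the early part of a long context, but they still read the latter, most recent context correctly (local perception; NIAH stays at roughly 10-25%)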
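The rough sketch below makes the half-curve argument concrete. It counts the RoPE frequency pairs whose full period fits inside the span of relative positions each model sees in training. It assumes a head dim of 128, β0 = 500,000 and a training length of 4096. It is a simplification for intuition, not the paper's own analysis, and `fully_covered_pairs` is a hypothetical helper.

```python
import math

def fully_covered_pairs(span: int, head_dim: int = 128, base: float = 500_000.0) -> int:
    """Count pairs i whose period 2*pi*base**(2i/head_dim) fits within `span` relative positions."""
    return sum(
        1
        for i in range(head_dim // 2)
        if 2 * math.pi * base ** (2 * i / head_dim) <= span
    )

T = 4096  # assumed training length
ar_pairs = fully_covered_pairs(span=T - 1)          # causal: offsets in [0, T-1]
dllm_pairs = fully_covered_pairs(span=2 * (T - 1))  # bidirectional: offsets in [-(T-1), T-1]
print(f"AR: {ar_pairs}/64 pairs see a full cycle, diffusion: {dllm_pairs}/64")
```

Under these assumptions the count rises from 32 to 35 of 64 pairs. The gain looks small, but the pairs that join the "fully seen" group are exactly the slow ones whose periods lie between T and 2T. That is one way to read the smaller OOD region claimed for diffusion LLMs in this note.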

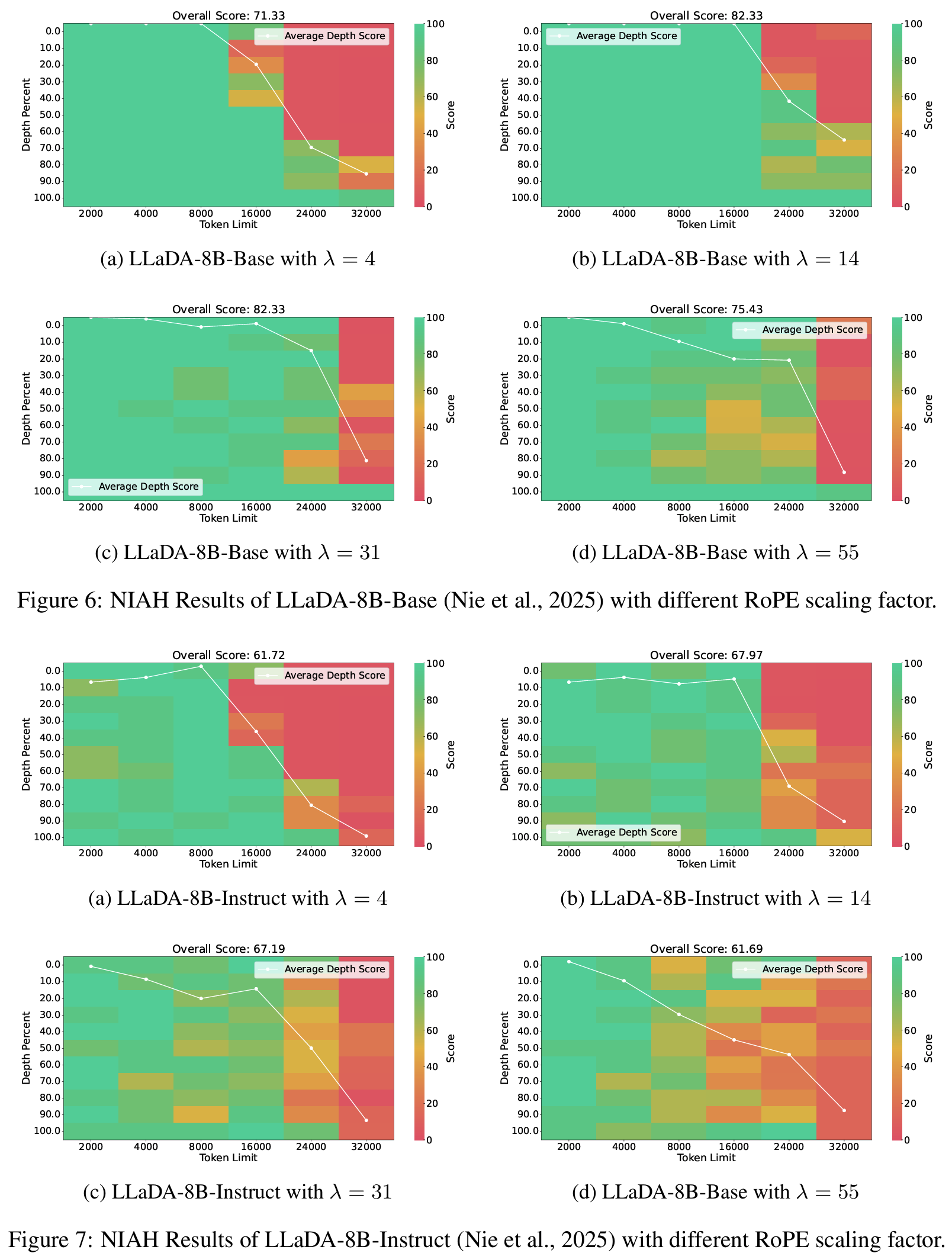

- λ ablation

Fig 6, 7 (+ 8, 9, 10, 11, 12)

- Stable extension at λ=4 and λ=14 (8K and 16K max length, respectively)

- From λ=31 (24K), lost-in-the-middle appears as in AR models: retrieval fails for context in the middle

- At λ=55, extrapolation fails.

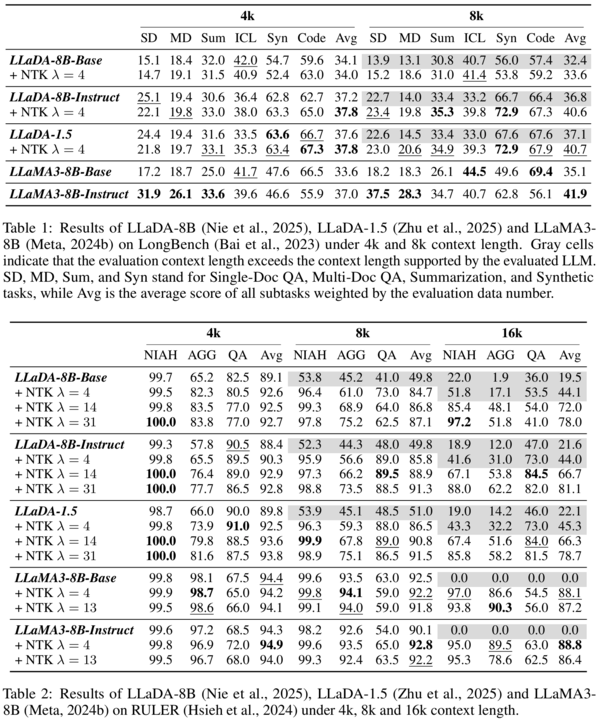

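- LongBench: LLaDA is on par with LLaMA3 and ahead on synthetic tasks; applying the scaling raises the average score by roughly 2-4 points

Tab 1

- RULER: LLaDA does better on QA but worse on aggregation-type tasks and variable tracking (VT); AR models appear stronger at combining global information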

Tab 2

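Personal note. This looks like one of the recent follow-ups in the "diffusion LLMs are worth keeping an eye on" line of work, though the bidirectional aspect keeps reminding me of BERT. The reddit author's approach (NTK-aware scaling) is also simple, and it is interesting that it convincingly shows extension potential here too.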