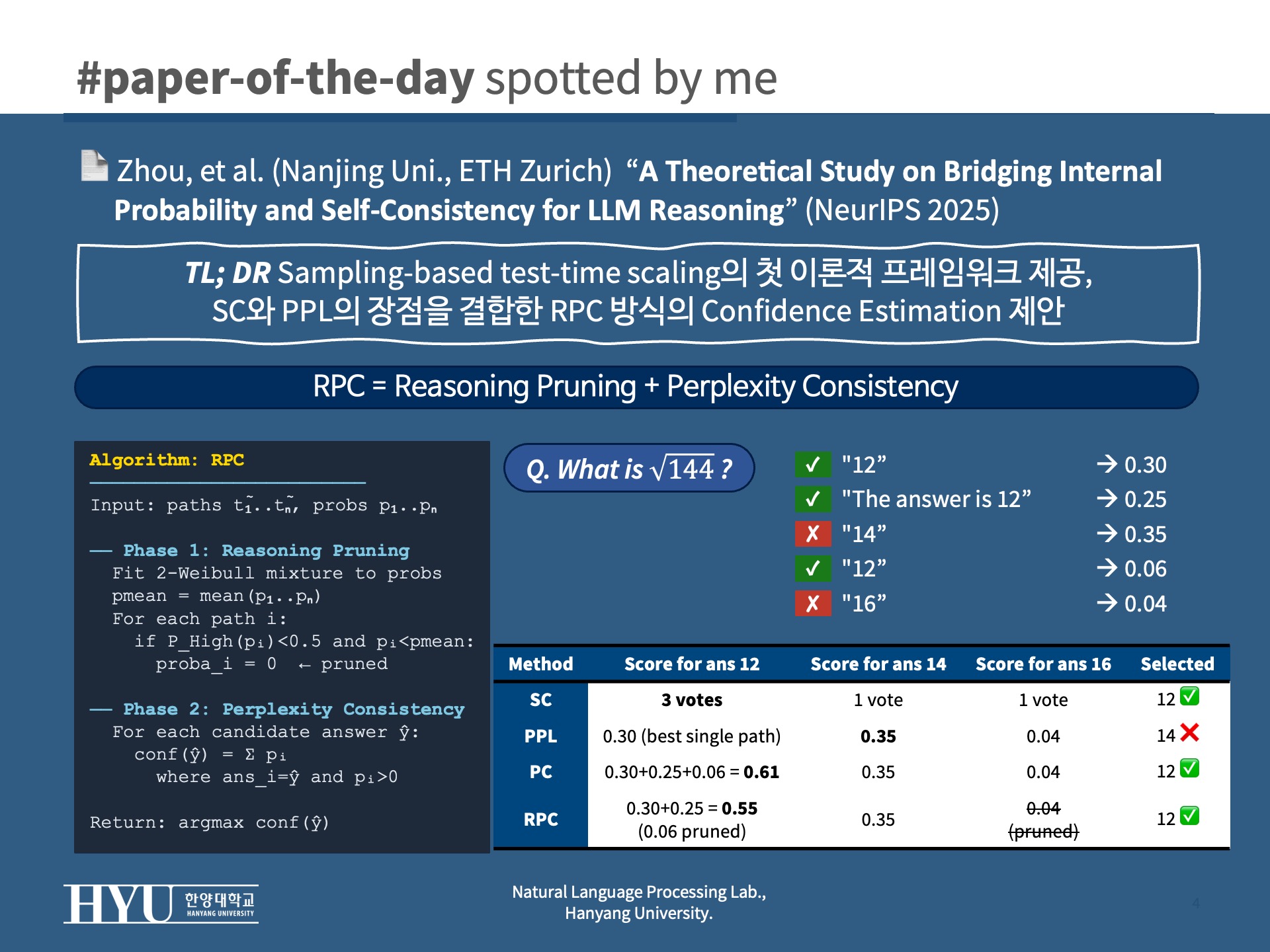

A Theoretical Study on Bridging Internal Probability and Self-Consistency for LLM Reasoning

Meta info.

- Authors: Zhi Zhou, Yuhao Tan, Zenan Li, Yuan Yao, Lan-Zhe Guo, Yu-Feng Li, Xiaoxing Ma

- Paper: https://arxiv.org/abs/2510.15444

- Affiliation: Nanjing University, ETH Zurich

- Conference: NeurIPS 2025

- Published: October 17, 2025

TL; DR

Proposes the first theoretical framework for sampling-based test-time scaling, analyzes the limitations of SC and PPL, and introduces RPC, which combines the strengths of both.

Background

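- Test-time scaling: spending extra computation at inference time to improve reasoning performance

- Sampling-based: generate N reasoning paths, estimate a confidence for each candidate, and pick the best answer in a Best-of-N fashion

- LLM sampling is stochastic, so the same input yields a different output on each run, which makes confidence estimation the key component

- Two representative confidence estimation methods

- Self-Consistency (SC): sample n paths and estimate confidence by majority vote

- No log probabilities needed, so it applies to both open- and closed-source models

- Aggregates equivalent paths at the answer level, so model error is low

- Drawback: the error shrinks only in inverse proportion to the number of samples (linear convergence), so convergence is slow when samples are few

- Perplexity (PPL): use the LLM's internal token probabilities directly as the confidence

- Requires log probabilities, so open-source models only

- Scores each path independently, so model error is high

- Error converges exponentially fast, but degrades to linear on hard problems where the probability is close to 0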


- Both methods work well in practice, but there was no theoretical basis explaining why they work or how they should be improved
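
To make the contrast between the two estimators concrete, here is a minimal sketch on toy data. The `paths` list, the function names, and the choice to score a path by its full sequence probability (the exponential of the summed token log-probabilities) are assumptions made for illustration, not the paper's implementation.

```python
import math
from collections import Counter

# Hypothetical toy data: each sampled path is (final_answer, token_log_probs).
paths = [
    ("42", [-0.1, -0.2, -0.1]),
    ("42", [-0.5, -0.4, -0.6]),
    ("41", [-0.05, -0.05, -0.1]),
    ("42", [-0.3, -0.3, -0.2]),
]

def sc_confidence(paths):
    """Self-Consistency: the confidence of an answer is its vote share."""
    votes = Counter(answer for answer, _ in paths)
    return {answer: count / len(paths) for answer, count in votes.items()}

def ppl_confidence(paths):
    """PPL-style: score each path on its own by its sequence probability."""
    return [(answer, math.exp(sum(logps))) for answer, logps in paths]

sc = sc_confidence(paths)
sc_answer = max(sc, key=sc.get)

ppl = ppl_confidence(paths)
ppl_answer = max(ppl, key=lambda item: item[1])[0]

print("SC picks", sc_answer, "with confidence", sc[sc_answer])
print("PPL picks", ppl_answer)
```

On this toy input the two estimators disagree: three of four paths vote for one answer, while the single highest-probability path points to the other. That is the aggregation gap the notes describe.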

Problem Statement

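- Problem with SC: the error decreases only linearly in the number of samples, so adequate performance is hard to reach under a limited sampling budget

- Problems with PPL

- Two paths that state the same answer in different wording receive different scores, so the lack of aggregation leads to large model error

- Degeneration issue: the harder the problem (the smaller the probability), the more the exponential convergence advantage disappears

- Research Question: can PPL's fast convergence and SC's low model error be achieved at the same time?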

Suggestions

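Theoretical framework: Reasoning Error Decomposition

- Core idea: decompose the reasoning error into two independent terms

- Estimation Error: the gap between the estimated confidence and the true confidence; controllable through the number of samples and the estimation strategy

- Model Error: the gap between the true confidence and whether the answer is actually correct; depends on the LLM's own ability and is independent of the number of samples

- SC analysis (Proposition 2)

- Estimation Error = Bernoulli variance, hence linear convergence: halving the error requires doubling the samples

- Model Error = kept low thanks to answer-level aggregation

- PPL analysis (Proposition 3)

- Estimation Error = exponential convergence: a small increase in samples reduces the error quickly

- However, as the path probability approaches 0, the exponential advantage vanishes and convergence degrades to roughly linear

- Model Error = higher than SC's because paths are scored individually (proven theoretically)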

| Method | Estimation Error convergence | Model Error | Main issue |
|---|---|---|---|
| SC | Linear | Low | Needs many samples |
| PPL | Exponential (with degeneration) | High | Degrades on hard problems |
| RPC | Exponential | Low | - |
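
The decomposition and the SC rate can be written out as a short derivation. This is a sketch under assumed notation: a squared-error form, $\hat{p}$ for the estimated confidence, $p$ for the model's true confidence, $y$ for the ground-truth answer, and an unbiased estimator so that the cross term vanishes. The paper's exact statements may differ in detail.

$$
\mathbb{E}\big[(\hat{p}(\hat{y}\mid x) - \mathbb{1}[\hat{y}=y])^2\big]
= \underbrace{\mathbb{E}\big[(\hat{p}(\hat{y}\mid x) - p(\hat{y}\mid x))^2\big]}_{\text{Estimation Error}}
+ \underbrace{\big(p(\hat{y}\mid x) - \mathbb{1}[\hat{y}=y]\big)^2}_{\text{Model Error}}
$$

For SC, the estimate is a mean of $n$ Bernoulli draws, so its estimation error is the Bernoulli variance divided by $n$:

$$
\hat{p}^{\mathrm{SC}}(\hat{y}\mid x) = \frac{1}{n}\sum_{i=1}^{n}\mathbb{1}[\hat{y}_i=\hat{y}]
\quad\Longrightarrow\quad
\mathbb{E}\big[(\hat{p}^{\mathrm{SC}} - p)^2\big] = \frac{p\,(1-p)}{n},
\qquad p = p(\hat{y}\mid x).
$$

As intuition for the exponential rate and its degeneration (an illustration of the mechanism, not the paper's bound): a path with probability $p$ is missing from $n$ independent samples with probability $(1-p)^n \le e^{-np}$, which decays exponentially in $n$. When $np \ll 1$, however, $(1-p)^n \approx 1 - np$, so the decay is only linear in $n$.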

Method: RPC (Reasoning-pruning Perplexity Consistency)

- RPC: a post-hoc confidence estimation method made up of two sequential components

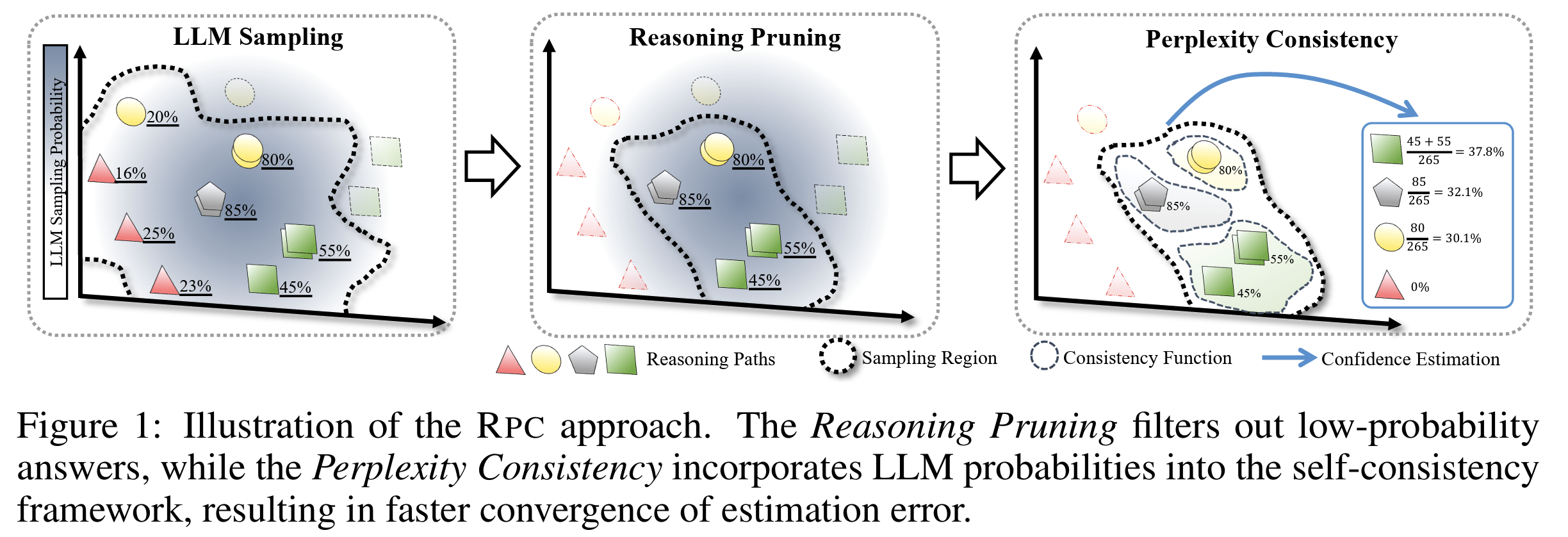

Component 1: Perplexity Consistency (PC)

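- Idea: use internal probabilities as PPL does, but sum the probabilities of paths that reach the same answer, as SC does

- Sum the probabilities of all sampled paths that produce the same answer ŷ and use the total as that answer's confidence:

$$\mathrm{Confidence}(\hat{y}) = \sum_{\tilde{t}\,:\,g(\tilde{t}) = \hat{y}} p(\tilde{t} \mid x)$$

where the sum runs over all retained paths $\tilde{t}$ with $g(\tilde{t}) = \hat{y}$.

- Effect (Theorem 4)

- Estimation Error: achieves exponential convergence like PPL while keeping SC-style answer-level aggregation

- Model Error: stays as low as SC's

- However, degeneration can still occur when path probabilities are extremely low, which RP addresses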
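
The aggregation step of PC can be sketched in a few lines. The data layout `(path_text, answer, log_prob)`, the function name, and the decision to count each distinct path only once (so that a path sampled twice does not contribute its probability twice) are assumptions of this sketch.

```python
import math
from collections import defaultdict

# Hypothetical toy data: (path_text, final_answer, sequence log-probability).
paths = [
    ("path A", "42", math.log(0.20)),
    ("path B", "42", math.log(0.15)),
    ("path C", "41", math.log(0.30)),
    ("path D", "42", math.log(0.10)),
    ("path A", "42", math.log(0.20)),  # same path sampled twice
]

def perplexity_consistency(paths):
    """Sum the model probability of every distinct path that yields each answer."""
    confidence = defaultdict(float)
    seen = set()
    for text, answer, logp in paths:
        if text in seen:  # assumption: each distinct path counts once
            continue
        seen.add(text)
        confidence[answer] += math.exp(logp)
    return dict(confidence)

pc = perplexity_consistency(paths)
best_answer = max(pc, key=pc.get)
print(pc, best_answer)
```

Here no single path for the majority answer has the highest probability, yet the summed mass of its three distinct paths exceeds that of the competing answer, so the aggregated confidence selects it.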

Component 2: Reasoning Pruning (RP)

- Idea: a path to which the model itself assigns near-zero probability is unlikely to be correct, so remove such paths before running PC

- Automatic threshold selection: model the probability distribution of the sampled paths as a 2-component Weibull mixture

- This automatically splits the distribution into a high-probability region and a low-probability region

- Prune paths with P_High < 0.5 whose probability is also below the overall mean

- Hyperparameter-free: no threshold needs to be set manually

- Effect (Theorem 7): with the optimal threshold, optimal error reduction is guaranteed with high probability

- Removing noise paths resolves PC's degeneration problem

- Low-probability incorrect paths get removed, so model error decreases as well
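
The pruning rule can be illustrated as follows. This sketch assumes the 2-component Weibull mixture has already been fitted (the fitting step is omitted) and reads P_High as the posterior probability that a path belongs to the high-probability component; that reading, the parameter values, and all function names are hypothetical.

```python
import math

def weibull_pdf(x, shape, scale):
    """Density of a Weibull(shape, scale) distribution at x > 0."""
    z = x / scale
    return (shape / scale) * z ** (shape - 1) * math.exp(-(z ** shape))

def p_high(x, w_high, high, low):
    """Posterior probability that x belongs to the high-probability component."""
    num = w_high * weibull_pdf(x, *high)
    den = num + (1.0 - w_high) * weibull_pdf(x, *low)
    # If both densities underflow to 0, fall back to an uninformative 0.5.
    return num / den if den > 0 else 0.5

def prune(probs, w_high, high, low):
    """Return the indices kept: a path is dropped if P_High < 0.5 and prob < mean."""
    mean = sum(probs) / len(probs)
    return [
        i for i, p in enumerate(probs)
        if not (p_high(p, w_high, high, low) < 0.5 and p < mean)
    ]

# Hypothetical path probabilities and already-fitted (shape, scale) parameters.
probs = [0.30, 0.25, 0.28, 0.01, 0.02]
kept = prune(probs, w_high=0.6, high=(5.0, 0.3), low=(1.5, 0.02))
print(kept)
```

Requiring both conditions means a path is removed only when the mixture assigns it to the low-probability region and it also sits below the overall mean. The paths that remain would then be passed to PC.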

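Full algorithm

- Phase 1 (RP): fit the Weibull mixture, then remove low-probability paths

- Phase 2 (PC): sum probabilities per answer over the remaining paths, then select the answer with the highest confidence

- Additional overhead: on MathOdyssey with 128 samples, SC takes 0.006 s per question versus 0.036 s for RPC (negligible compared with LLM inference)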

Effects

- Experimental Setup

- Models: InternLM2-Math-Plus 1.8B/7B, DeepSeekMath-RL 7B, DeepSeek-Coder 33B, DeepSeek-R1-Distill-Qwen-7B

- Datasets: MATH, MathOdyssey, OlympiadBench, AIME (four math benchmarks); HumanEval, MBPP, APPS (three code benchmarks); GPQA, LogiQA

- Baselines: PPL, SC, Verbalized Confidence (VERB)

- Metrics: Accuracy ↑, ECE (Expected Calibration Error) ↓, sampling budget ↓

- Each experiment repeated with 10 random seeds on A800/H800 GPUs
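
For readers unfamiliar with the ECE metric listed above, here is a minimal sketch of the standard binned definition. The number of bins, the equal-width binning, and the toy inputs are assumptions; the paper's exact evaluation protocol is not described in these notes.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: sum over bins of (bin size / N) * |accuracy(bin) - mean confidence(bin)|."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # conf == 1.0 goes in the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += len(members) / n * abs(accuracy - avg_conf)
    return ece

# Hypothetical predictions: confidence of the chosen answer and whether it was correct.
confidences = [0.9, 0.9, 0.9, 0.9, 0.3, 0.3]
correct = [True, True, True, False, False, True]
ece = expected_calibration_error(confidences, correct)
print(round(ece, 4))
```

This function returns a value in [0, 1]. The ECE figures quoted in the results (for example 12.37) appear to be on a percentage scale, which would correspond to multiplying by 100.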

- Results

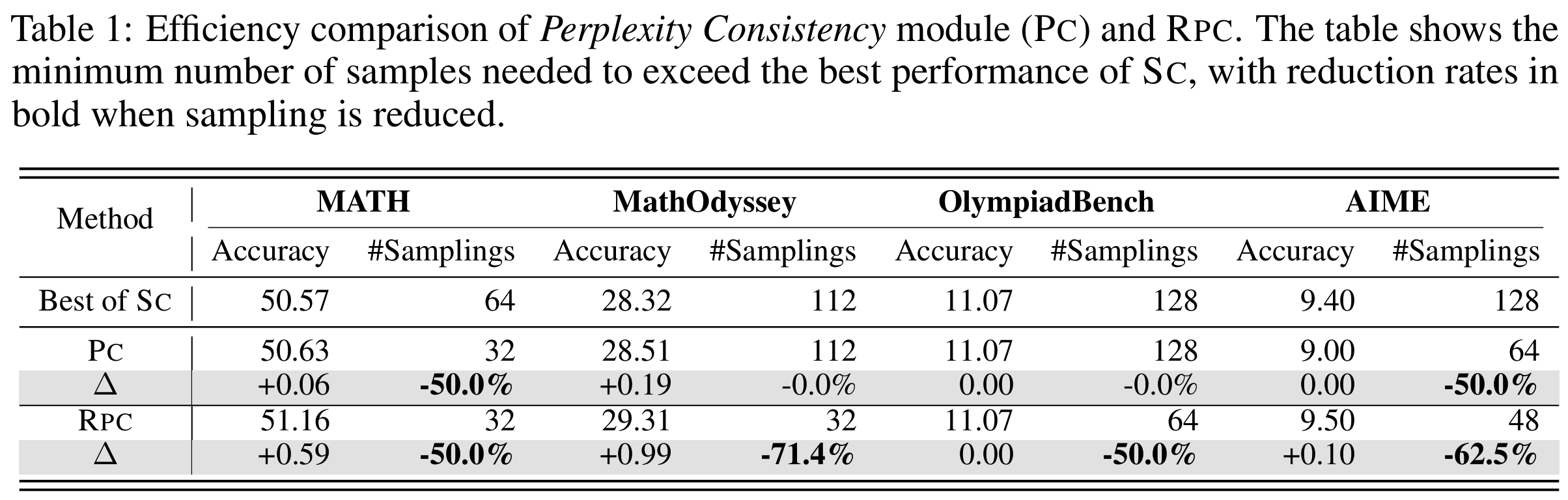

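- RQ1 (Efficiency): Table 1 compares the minimum number of samples needed to match SC's best performance

- RPC cuts the number of samples by 50~71% relative to SC on all four math benchmarks while matching or exceeding SC's performance

- MathOdyssey: 112 → 32 samples (-71.4%), the largest reduction

- PC alone fails to reduce samples on some datasets because of degeneration; adding RP gives consistent improvements on every dataset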

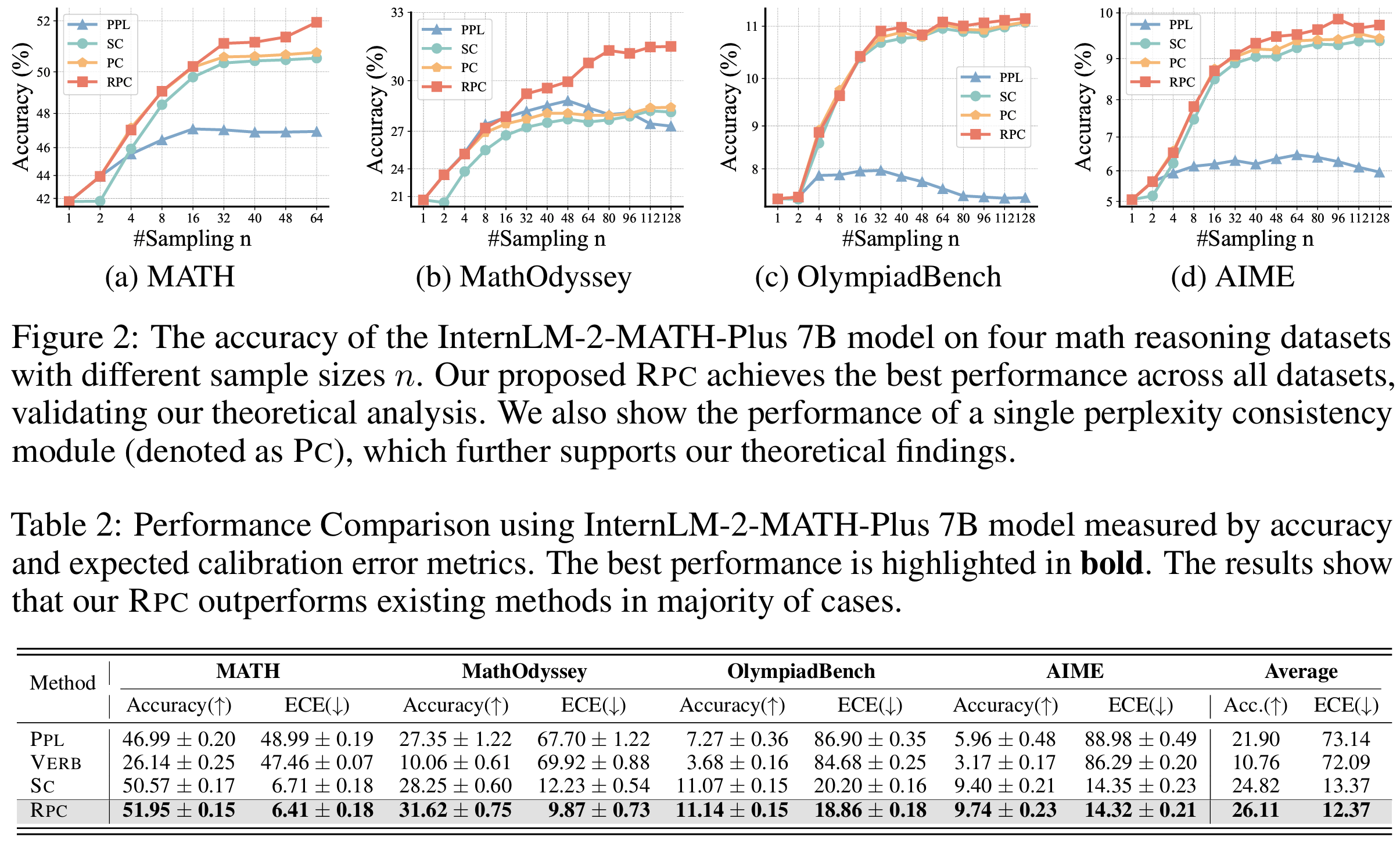

- RQ2 (Efficacy): Figure 2 shows accuracy as a function of the number of samples

- The ordering RPC > PC > SC > PPL holds consistently across all sampling budgets

- PPL plateaus early because of its high model error; RPC avoids this and also reaches a higher accuracy ceiling

- Table 2 (InternLM2-Math-Plus 7B) average: RPC 26.11% / SC 24.82%

- Table 3 confirms the same trend for the 1.8B model and DeepSeekMath-RL 7B

- RQ3 (Reliability): Table 2 compares accuracy and ECE together

- PPL: average ECE of 73.14, i.e. severely miscalibrated

- VERB: worst on both accuracy and ECE

- SC: ECE of 13.37 is reasonable but falls short of RPC

- RPC: accuracy 26.11% with ECE 12.37, the best on both accuracy and calibration

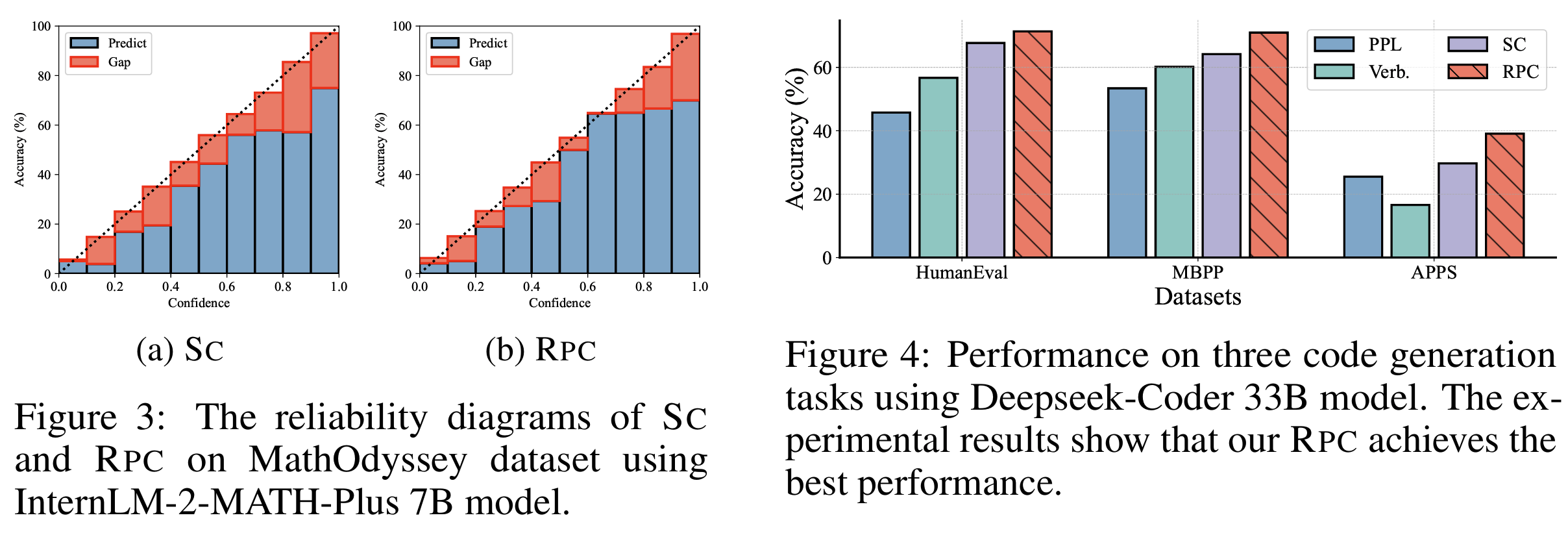

- Figure 3 (reliability diagram): RPC's predicted confidence aligns much more closely with the actual accuracy

- Additional Results

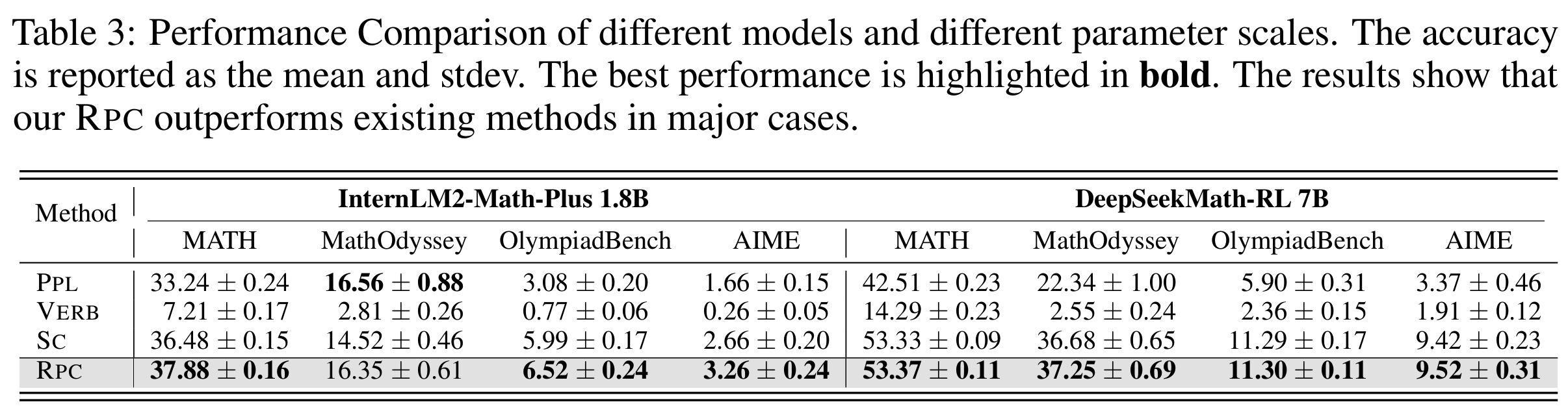

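- Figure 4: RPC also outperforms all baselines on the three code generation benchmarks (HumanEval, MBPP, APPS)

- RPC remains effective on an R1-style thinking model (DeepSeek-R1-Distill-Qwen-7B) (Table 5)

- Combining RPC with advanced baselines such as ESC and BoN + reward model also yields consistent gains (Tables 6, 7)

- RPC stays robust at high sampling temperatures (1.1, 1.3), whereas SC degrades at high temperature as its estimation error grows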


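Personal note. The paper's core contribution seems to be that it gives the first answer to what actually differs between these methods in theory. Organizing things along two axes, Estimation Error (convergence rate) vs. Model Error (aggregation scheme), made my somewhat hazy picture of confidence estimation research light up like a bulb. That said, as I mentioned, given how well the theoretical argument is laid out, the proposed method itself felt comparatively less sharp.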